Low-Dimensional, Auditory Feature Vectors that Improve VTL Normalization in Automatic Speech Recognition

From CNBH Acoustic Scale Wiki

The syllables of speech contain information about the vocal tract length (VTL) of the speaker as well as the glottal pulse rate (GPR) and the syllable type. Ideally, the pre-processor used for automatic speech recognition (ASR) should segregate syllable type information from the VTL and GPR information. The auditory system appears to perform this segregation, and this may well be why human speech recognition (HSR) is so much more robust than automatic speech recognition. This paper compares robustness of two types of feature vectors with regard to the recognition performance they support: feature vectors based on Mel-Frequency Cepstral Coefficients (MFCCs), which are the traditional feature vectors of ASR, and a new form of feature vector inspired by the excitation patterns produced by speech sounds in the auditory system. The two types of feature vector were evaluated using a fairly traditional syllable recognizer based on a Hidden-Markov-Model of syllable production. The speech stimuli were syllables scaled with the vocoder STRAIGHT to have a wide range of vocal tract lengths (VTLs) and glottal pulse rates (GPRs). For both recognizers, training took place with stimuli from a small central range of scaled values. When tested on the full range of scaled syllables, average performance for MFCC-based recognition was just 73.5 %, with performance falling close to 0 % for syllables with extreme VTL values. The feature vectors produced using the auditory model led to much better performance; the average for the full range of scaled syllables was about 91 %, and performance never fell below 65% even for extreme combinations of VTL and GPR. Moreover, the auditory feature vectors contain just 12 features whereas the standard MFCC vectors contain 39 features.

The text and figures are from a report arising from an EOARD project (FA8655-05-1-3043) entitled Measuring and Enhancing the Robustness of Automatic Speech Recognition. An abbreviated version of this paper appears in the proceedings for Acoustics2008.

Roy Patterson, Jessica Monaghan, Tom Walters and Christian Feldbauer


INTRODUCTION

When an adult and a child say the same sentence, the information content is the same, but the waveforms are very different. Adults have longer vocal tracts and heavier vocal cords than children. Despite these differences, humans have no trouble understanding speakers with varying Vocal Tract Lengths (VTLs) and Glottal Pulse Rates (GPRs); indeed, Smith et al. (2005) showed that both VTL and GPR could be extended far beyond the ranges found in the normal population without a serious reduction in recognition performance. This robustness of Human Speech Recognition (HSR) stands in marked contrast to that of Automatic Speech Recognition (ASR), where recognizers trained on an adult male do not work for women, let alone children. GPR and VTL are primarily properties of the source of the sound at the syllable level in speech communication, quite separate from the information that determines syllable type. The microstructure of the waveform reveals a stream of glottal pulses each followed by a complex resonance showing the composite action of the vocal tract above the larynx on the pulses as they pass through it. The resonances of the vocal tract are known as formants and they determine the envelope of the short-term magnitude spectrum of speech sounds. The formant peak frequencies are determined by VTL and vocal tract shape. As a child grows into an adult, the formants of a given vowel decrease in inverse proportion to VTL, and this is the form of VTL information in the magnitude spectrum (Patterson et al., 2006). There is a strong correlation between height and VTL in humans which has recently been documented using magnetic resonance imaging (MRI) (Fitch and Giedd 1999). This paper is concerned with the robustness of ASR when presented with speakers of widely varying sizes; that is, how the performance of ASR systems varies as the spectra of speech sounds expand or compress in frequency with changes in speaker size. 
ASR requires a compact representation of speech sounds for both the training and recognition stages of processing, and traditionally ASR systems use a frame-based spectrographic representation of speech to provide a sequence of ‘feature vectors’. Ideally the construction of the feature vectors should involve segregating the syllable type information from the speaker-size information (GPR and VTL), and the removal of the size information from the feature vectors. In theory, this would help make the recognizer robust to variation in speaker size. The effect of source size on the speech waveform can be modeled by considering the impulse response of a scaled filter. If $l_r$ is the VTL of a reference speaker, the VTL of a test speaker can be represented by $l = \alpha l_r$, where $\alpha$ is the relative size. If the impulse response of the vocal tract of the reference speaker is $h_r(t)$, then the impulse response of the vocal tract of the test speaker is $h(t) = h_r(t/\alpha)$. Similarly, in the frequency domain, $H(f) = H_r(\alpha f)$. When plotted on a logarithmic frequency scale the frequency dilation produced by a change in speaker size becomes a linear shift of the spectrum, as a unit, along the axis – towards the origin as the speaker increases in size.
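The relation between frequency dilation and a shift on a logarithmic axis can be checked numerically. The following is a minimal sketch, not part of the original study: the two-peak envelope `reference_envelope`, its peak positions, and the value of `alpha` are hypothetical choices made only for illustration.

```python
import numpy as np

def reference_envelope(f_hz):
    """Hypothetical spectral envelope H_r(f) with two formant-like peaks (assumption)."""
    log_f = np.log(f_hz)
    peak1 = np.exp(-0.5 * ((log_f - np.log(500.0)) / 0.15) ** 2)
    peak2 = 0.7 * np.exp(-0.5 * ((log_f - np.log(2000.0)) / 0.15) ** 2)
    return peak1 + peak2

alpha = 1.25  # relative size of the test speaker (illustrative value)

# Sample the spectra on a logarithmic frequency axis.
log_f = np.linspace(np.log(100.0), np.log(8000.0), 2000)
f = np.exp(log_f)

h_ref = reference_envelope(f)           # H_r(f)
h_test = reference_envelope(alpha * f)  # H(f) = H_r(alpha * f)

# Position of the largest peak on the log-frequency axis for each speaker.
shift = log_f[np.argmax(h_test)] - log_f[np.argmax(h_ref)]

print("shift on log axis:", shift)
print("-log(alpha):      ", -np.log(alpha))
```

Up to the resolution of the frequency grid, the shift equals $-\log\alpha$: a larger speaker ($\alpha > 1$) moves the whole pattern towards the origin without changing its shape.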

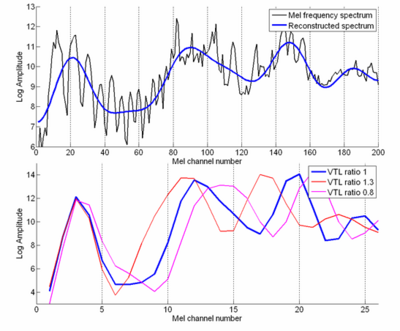

Feature vectors constructed from low-order MFCCs

Most commercial ASR systems use Mel-Frequency Cepstral Coefficients (MFCCs) as the features in their vectors because they are believed to represent speech information well, and they are robust to background noise. A Mel-Frequency, Cepstral Coefficient is the amplitude of a cosine function fitted to a spectral frame of a sound (plotted in quasi-log-frequency, log-magnitude coordinates). The MFCC feature vector is computed in a sequence of steps. (1) A temporal window is applied to the sound and a fast Fourier transform is performed on this windowed signal. (Note that the window position is stepped regularly along in time without regard for the timing of the glottal pulses.) (2) The spectrum is mapped onto the mel-frequency scale using a triangular filter-bank; the mel-frequency scale is a quasi-logarithmic scale, similar to Fletcher’s ‘critical band’ scale or the ERB scale (Glasberg and Moore, 1990). (3) Spectral magnitude is converted to the logarithm of spectral magnitude. (4) A Discrete Cosine Transform (DCT) is applied to the mel-frequency log-magnitude spectrum. The MFCCs are the coefficients of this cosine series expansion, or ‘cepstrum’. (5) The first twelve, or so, of these cepstral coefficients form the feature vector; the remaining higher-order coefficients are discarded. The discarding of the higher-order cepstral coefficients has the effect of smoothing the mel-frequency spectrum as the feature vector is constructed. The process is illustrated in Fig. 1. The black line in panel (a) shows the mel-frequency spectrum of an /i/ vowel spoken by an adult male of average height. The spectrum was constructed with a 200-channel mel-frequency ‘filterbank’, so it reveals the frequency fine structure, and shows, for example, the harmonic structure of this voiced vowel in the region below about channel 80. 
(The filterbank does not contain filters in the usual sense; the term ‘filter’ just refers to the weighting functions that average local spectral components into a mel-frequency spectral component.) The bold blue line presents a smoothed version of the mel-frequency spectrum rather like its envelope; it was produced by inverse cosine-transforming the first 12 coefficients of the cepstral sequence. The blue spectrum shows the spectral information that the feature vector would contain if it were based on a high-resolution mel-frequency spectrum like that shown by the black line in panel (a). The peaks in the smoothed spectrum (blue line) provide estimates of the formant frequencies of the vocal tract. Note that none of the spectral components in the region of the first formant provides a good estimate of the formant frequency on its own. The bold, blue line in the lower panel of Fig. 1 shows the summary of the mel-frequency spectrum of the /i/ vowel as it is encoded in the standard MFCC feature vector that is used for speech recognition. This standard feature vector consists of the lowest 12 MFCCs calculated from a 26-channel mel-frequency ‘filterbank’ (rather than the 200-channel ‘filterbank’ used in the upper panel); the summary spectrum was created by performing an inverse cosine-transform on this feature vector. It shows that the MFCC feature vector encodes much of the formant-position information of the vowel; the peaks in the mel-frequency spectrum (Fig. 1, lower panel) are in approximately the same positions as the peaks in the mel-frequency spectra in the upper panel. The removal of the higher-order coefficients from the cepstral sequence effectively removes the fine structure of the mel-frequency spectrum, including most of the pitch information, that is, the spacing of the harmonics in the magnitude spectrum. 
The log of the energy of the un-normalized spectrum was included in the feature vector, as is traditional with HMM recognizers, so the vector has 13 dimensions in ASR terms.
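The five steps described above can be sketched for a single frame as follows. This is an illustrative sketch only: the Hamming window, the mel formula, the frame length, and the choice to skip the zeroth cepstral coefficient are assumptions, and the helper names (`mel_filterbank`, `dct_ii`, `mfcc_features`) are hypothetical rather than taken from the recognizer used in the study.

```python
import numpy as np

def hz_to_mel(f_hz):
    return 2595.0 * np.log10(1.0 + f_hz / 700.0)

def mel_to_hz(mel):
    return 700.0 * (10.0 ** (mel / 2595.0) - 1.0)

def mel_filterbank(n_filters, n_fft, fs):
    """Triangular weighting functions, equally spaced on the mel scale."""
    edges_hz = mel_to_hz(np.linspace(0.0, hz_to_mel(fs / 2.0), n_filters + 2))
    fft_freqs = np.fft.rfftfreq(n_fft, 1.0 / fs)
    bank = np.zeros((n_filters, fft_freqs.size))
    for i in range(n_filters):
        lo, mid, hi = edges_hz[i:i + 3]
        rising = (fft_freqs - lo) / (mid - lo)
        falling = (hi - fft_freqs) / (hi - mid)
        bank[i] = np.clip(np.minimum(rising, falling), 0.0, None)
    return bank

def dct_ii(x):
    """Unnormalized DCT-II, written out so the cosine basis is explicit."""
    n = x.size
    k = np.arange(n)[:, None]
    m = np.arange(n)[None, :]
    return np.cos(np.pi * k * (m + 0.5) / n) @ x

def mfcc_features(frame, fs, n_filters=26, n_ceps=12):
    windowed = frame * np.hamming(frame.size)            # (1) temporal window
    magnitude = np.abs(np.fft.rfft(windowed))            #     and FFT
    mel_spec = mel_filterbank(n_filters, frame.size, fs) @ magnitude  # (2) mel mapping
    log_mel = np.log(mel_spec + 1e-10)                   # (3) log magnitude
    cepstrum = dct_ii(log_mel)                           # (4) cosine transform
    low_order = cepstrum[1:n_ceps + 1]                   # (5) keep 12 (c0 skipped: assumption)
    log_energy = np.log(np.sum(frame ** 2) + 1e-10)      # log energy as the 13th dimension
    return np.append(low_order, log_energy)

# Synthetic voiced frame: harmonics of 165 Hz, 25 ms at 16 kHz (illustrative values).
fs = 16000
t = np.arange(400) / fs
frame = sum(np.sin(2 * np.pi * 165.0 * h * t) / h for h in range(1, 20))
features = mfcc_features(frame, fs)
print(features.shape)
```

Truncating the cepstrum in step (5) is what smooths the mel-frequency spectrum; inverse-transforming the retained coefficients would give an envelope like the bold blue lines in Fig. 1.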

The coding of VTL information in MFCC feature vectors

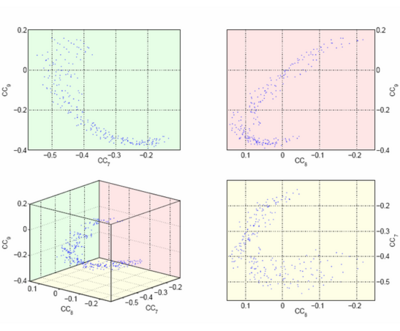

The mel-frequency spectra constructed from two other versions of the /i/ vowel are presented in the lower panel of Fig. 1 by the magenta and red lines. They are scaled versions of the vowel, generated with a vocoder, that represent the /i/ vowels of (i) a speaker with a vocal tract 0.8 times that of the starting vowel (magenta line) and (ii) a speaker with a vocal tract 1.3 times that of the starting vowel (red line). The three vowels all have the same GPR in this example (165 Hz). In the region above about channel 5, the mel-frequency spectra of the scaled vowels are shifted versions of the mel-frequency spectra of the original vowel, and the shift is essentially linear, as would be expected on this quasi-logarithmic scale (mel channel number). This shows that the MFCC feature vectors contain both the information that the vowel is an /i/ and the speaker size information (the position of the distribution along the channel dimension); that is, the representation is to some degree ‘scale-shift-covariant’ (Patterson, van Dinther and Irino, 2007). Moreover, it appears as if it would be reasonably easy to separate the vowel type information from the VTL information, in the region above channel 5. Most existing techniques for VTL normalization (VTLN) of MFCC feature vectors involve attempts to counteract the dilation of the magnitude spectrum by warping the mel ‘filters’ (the weighting functions that convert the magnitude spectrum of the vowel into a mel-frequency spectrum) prior to the production of the cepstral coefficients. Unfortunately, the process of finding the value of the relative size parameter is computationally expensive, and must be done individually for each new speaker. The problem is that the relationship between the value of a specific MFCC and VTL is complicated, and a change in VTL typically leads to substantial changes in the values of all of the MFCCs. 
In other words, the individual cepstral coefficients all contain a mixture of syllable-type information and VTL information, and it is very difficult to segregate the information once it is mixed in this way. As a result, the MFCCs do not themselves effect segregation of the two types of information. The segregation and normalization problems are left to the recognizer that operates on the feature vectors, and it is this which limits the robustness of ASR to changes in speaker size when it is based on an HMM recognizer operating on MFCC feature vectors. The problem the recognizer faces is illustrated in Fig. 2, which shows the feature space formed by three of the cepstral coefficients (the 7th, 8th, and 9th) for the vowel /i/; these features carry a substantial portion of the information in the vowel. The lower left-hand panel shows an isometric view of the space, while the other three panels show projections onto the three marginal planes of the space. Although the mel-frequency spectrum shifts in an approximately linear way as the acoustic scale of the vowel is manipulated (Fig. 1, lower panel), this shift is not encoded in a simple way in the cepstral coefficients. As the acoustic scale of the vowel is varied from that associated with a small child (0.8) to that associated with a large male (1.3), the locus of the intersection of the cepstral coefficients proceeds along an arc from the lower right-hand end to the upper right-hand end, with women in the central region where the locus reverses direction. A recognizer trained on the /i/ vowels of an adult male is unlikely to recognize the /i/ of a small woman or a child as being within its cluster. This is probably the reason that ASR based on MFCC feature vectors is far less robust than HSR, and the reason that the main success of ASR is in systems that are trained on a specific speaker and used solely for that speaker. 
On reflection, it seems odd to use cepstral coefficients generated with a discrete cosine transform as features in a system where the sounds are known to vary in acoustic scale. As illustrated in Fig. 1, for a given vowel, a change in acoustic scale (or VTL) results in a shift of the spectrum, as a unit, on a logarithmic frequency axis. One of the main uses of the Fourier transform is to segregate distribution shape information from distribution position information; it converts the shape information into coefficient magnitude information and the position information into coefficient phase information. So, we might expect that the MFCCs would be based on the magnitude of the components of a complex Fourier transform since they would be, at least theoretically, scale-shift invariant. But instead, the MFCCs are generated with a cosine transform which specifically prohibits the basis function from shifting with acoustic scale. That is, a cosine maximum which fits a formant peak for a vowel from a given vocal tract with a specific length cannot shift to follow the formant peak as it shifts with VTL; the maxima for a given cosine component occur at multiples of one specific frequency and they cannot change. As a result, a change in speaker size leads to a change in the magnitude of all of the cepstral coefficients when a change in the phase of the basis functions would provide a more consistent representation of syllable type. The size information is still present in the MFCCs, but it is not easily accessible and a very large training database would be needed to accurately model it.
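The contrast between Fourier magnitudes and cosine-transform coefficients can be illustrated with a toy profile. This is a sketch under simplifying assumptions: the two-peak `profile` is hypothetical, and the shift is circular (`np.roll`), which is an idealization of the shift a real spectrum undergoes on a finite frequency axis.

```python
import numpy as np

def dct_ii(x):
    """Unnormalized DCT-II of a 1-D array."""
    n = x.size
    k = np.arange(n)[:, None]
    m = np.arange(n)[None, :]
    return np.cos(np.pi * k * (m + 0.5) / n) @ x

# Hypothetical log-frequency profile with two formant-like peaks.
channels = np.arange(64)
profile = (np.exp(-0.5 * ((channels - 20) / 2.0) ** 2)
           + 0.6 * np.exp(-0.5 * ((channels - 35) / 2.0) ** 2))

# A change in acoustic scale, idealized as a shift of 5 channels.
shifted = np.roll(profile, 5)

# Fourier magnitudes: the shift only changes the phases.
dft_mag_change = np.max(np.abs(np.abs(np.fft.rfft(profile))
                               - np.abs(np.fft.rfft(shifted))))

# Low-order cosine-transform coefficients: the shift changes their values.
dct_change = np.max(np.abs(dct_ii(profile)[:12] - dct_ii(shifted)[:12]))

print("largest change in DFT magnitudes:", dft_mag_change)
print("largest change in first 12 DCT coefficients:", dct_change)
```

The Fourier magnitudes are unchanged to within numerical precision, whereas the low-order cosine coefficients change substantially, which is the behavior the paragraph above attributes to MFCCs.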

It also seems odd to use a basis function which oscillates regularly, like a sinusoid, to represent the spectrum of a vowel which is known to consist of a small number of irregularly-spaced peaks; the resulting coefficients cannot provide a simple representation of the stimuli they are intended to encode. It seems that the main advantage of using MFCCs is, in fact, derived from the spectral smoothing achieved by the low-pass filtering of the cepstrum (i.e., restricting the feature vector to the lowest 12 of the cepstral coefficients). It would seem preferable to use features extracted by fitting Gaussians to the formant peaks; Stuttle and Gales (2001) demonstrated the advantage of Gaussian features for single speaker systems. Intuitively, Gaussian features should be largely invariant to changes in acoustic scale in vowel sounds, and so, in principle, a recognition system based on these features should be more robust to changes in speaker size. The superior robustness of HSR suggests that auditory processing is based on some form of scale transform (Cohen, 1993) rather than a Fourier transform, and that the scale transform automatically segregates vowel type information from speaker size information, as suggested by Irino and Patterson (2002). This, in turn, suggests that we should consider basing the features for the speech recognizer on auditory processing.

Feature Vectors from the Auditory Image Model

The Auditory Image Model (AIM) (Irino and Patterson 2002) simulates the general auditory processing that is applied to speech sounds, like any other sounds, as they proceed up the auditory pathway to the speech specific processing centers in the temporal lobe of cerebral cortex. AIM produces a pitch invariant, size covariant representation of sounds referred to as the Size Shape Image (SSI). This representation includes a simulation of the normalization for acoustic scale that is assumed to take place in the perception of sound by humans. The SSI is a 2-D representation of sound – ‘auditory filter frequency on a quasi-logarithmic (ERB) axis’ by ‘time-interval within the glottal cycle.’ However, the features produced in the representation by speech sounds are largely confined to a small band of channels, so the SSI can be summarized by its spectral profile (Patterson et al., 2007), and the profile has the same scale-shift covariance properties as the SSI itself.

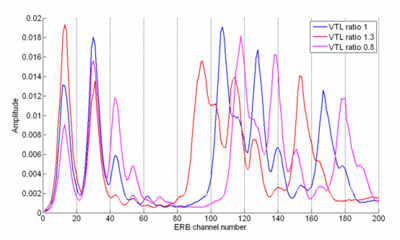

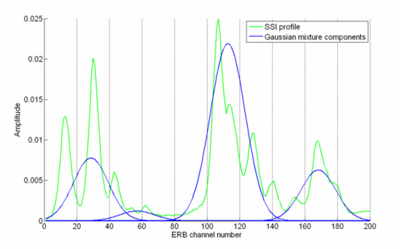

The SSI profiles produced by the three /i/ vowels described above are shown in Fig. 3; the color code is the same as for the mel-frequency spectra shown in the lower panel of Fig. 1. They are like excitation patterns (Glasberg and Moore, 1990), or auditory spectra, and the figure shows that the distribution associated with the vowel /i/ shifts along the axis with acoustic scale. Thus, the transformations performed by the auditory system produce segregation of the complementary features of speech sounds; that is, the information about the size of the speaker, and the size invariant properties of speech sounds, like vowel type. In this way the transformations simulate the neural processing of size information in speech by humans. Experiments show that speaker size discrimination and vowel recognition performance are related: when discrimination performance is good, vowel recognition performance is good (Smith et al. 2005). This suggests that recognition and size estimation take place simultaneously. It is assumed that the acoustic scale information is essentially VTL information, and that it is used to evaluate speaker-size, and that the normalized shape information facilitates speech recognition and makes the recognition processing robust. The dimensionality of the SSI profile is too large to be used as the input for a speech recognizer. In section II.5, we demonstrate how the information content can be summarized with a mixture of four Gaussians to produce a four-dimensional feature vector. The remainder of this paper shows how to construct this feature vector and how to use it as input for an auditory HMM recognizer. The performance of this bio-acoustically motivated recognizer is compared with that of an HMM recognizer using traditional MFCCs to demonstrate the greater robustness of feature vectors constructed from auditory principles.

METHODS, ASSUMPTIONS AND PROCEDURES

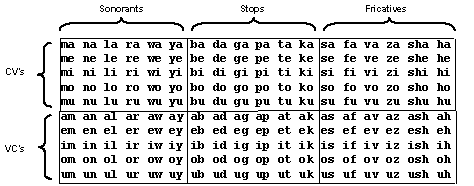

Structured, syllable corpus

The corpus was compiled by Ives et al. (2005) who used phrases of four syllables to investigate VTL discrimination and show that the just-noticeable difference (JND) in speaker size is about 5 %. There were 180 syllables in total, composed of 90 pairs of consonant-vowel (CV) and vowel-consonant (VC) syllables, such as ‘ma’ and ‘am’. There were three consonant-vowel (CV) groups and three vowel-consonant (VC) groups as shown in Table I. Within the CV and VC categories, the three groups were distinguished by consonant category: sonorants, stops, or fricatives. So, the corpus is a balanced set of simple syllables in CV-VC pairs, rather than a representative sample of syllables from the English language. The vowels were pronounced as they are in most five vowel languages, like Japanese and Spanish, so that they could be used with a wide range of listeners. A useful mnemonic for the pronunciation is the names of the notes of the diatonic scale, “do, re, mi, fa, so” with “tofu” for the /u/. For the VC syllables involving sonorant consonants (e.g., oy), the two phonemes were pronounced separately rather than as a diphthong.

The syllables were recorded from one speaker (author RP) in a quiet room with a Shure SM58-LCE microphone. The microphone was held approximately 5 cm from the lips to ensure a high signal to noise ratio and to minimize the effect of reverberation. A high-quality PC sound card (Sound Blaster Audigy II, Creative Labs) was used with 16-bit quantization and a sampling frequency of 48 kHz. The syllables were normalized by setting the RMS value in the region of the vowel to a common value so that they were all perceived to have about the same loudness.

Scaling the syllable corpus

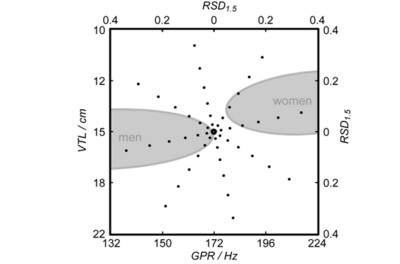

Once the syllable recordings were edited and standardized, a vocoder referred to as STRAIGHT (Kawahara and Irino, 2005) was used to generate all the different ‘speakers,’ that is, versions of the corpus in which each syllable was transformed to have a specific combination of VTL and GPR. STRAIGHT uses the classical source-filter theory of speech (Dudley, 1939) to segregate GPR information from the spectral-envelope information associated with the shape and length of the vocal tract. STRAIGHT produces a pitch-independent spectral envelope that accurately tracks the motion of the vocal tract throughout the syllable. Subsequently, the syllable can be resynthesized with arbitrary changes in GPR and VTL; so for example, the syllable of a man can be readily transformed to sound like a woman or a child. The vocal characteristics of the original speaker, other than GPR and VTL, are preserved by STRAIGHT in the scaled syllable. Syllables can also be scaled well beyond the normal range of GPR and VTL values encountered in everyday speech and still be recognizable (e.g., Smith et al., 2005). The central speaker in both the HSR and ASR studies was assigned GPR and VTL values on the line between the average adult man and adult woman on the GPR-VTL plane, and mid-way between the adult man and woman, where both the GPR and VTL dimensions are logarithmic. Peterson and Barney (1952) reported that the average GPR of men is 132 Hz, while that of women is 223 Hz, and Fitch and Giedd (1999) reported that the average VTLs of men and women were 155.4 and 138.8 mm, respectively. Accordingly, the central speaker was assigned a GPR of 171.7 Hz and a VTL of 146.9 mm. For scaling purposes, the VTL of the original speaker was taken to be 165 mm.
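A mid-point on a logarithmic axis is the geometric mean of the two end points, so the central speaker can be checked with a few lines of arithmetic. This sketch assumes that "mid-way" means exactly the geometric mean; the helper name `log_midpoint` is hypothetical.

```python
import math

def log_midpoint(a, b):
    """Mid-point of a and b on a logarithmic axis, i.e. their geometric mean."""
    return math.sqrt(a * b)

central_gpr_hz = log_midpoint(132.0, 223.0)   # average man and woman (Peterson and Barney, 1952)
central_vtl_mm = log_midpoint(155.4, 138.8)   # average man and woman (Fitch and Giedd, 1999)

print(round(central_gpr_hz, 1), "Hz")
print(round(central_vtl_mm, 1), "mm")
```

Under this assumption the VTL comes out at about 146.9 mm, matching the value above, and the GPR at about 171.6 Hz, close to the reported 171.7 Hz.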

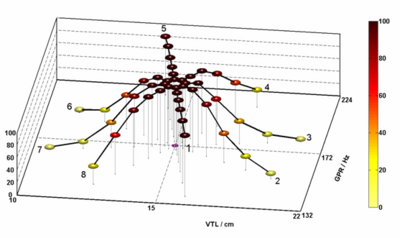

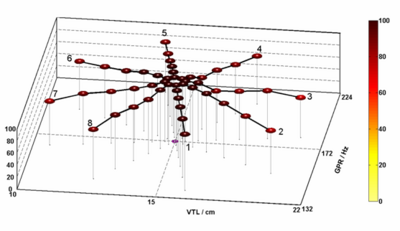

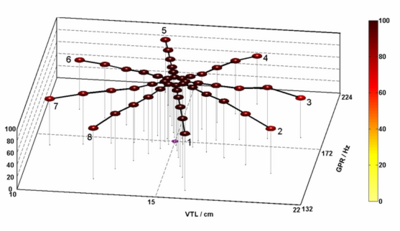

A set of 56 scaled speakers was produced with STRAIGHT in the region of the GPR-VTL plane surrounding the central speaker, and each speaker had one of the combinations of GPR and VTL illustrated by the points on the radial lines of the GPR-VTL plane in Fig. 3. There are seven speakers on each of eight spokes. The ends of the radial lines form an ellipse whose minor radius is four semi-tones in the GPR direction and whose major radius is six semi-tones in the VTL dimension; that is, the GPR radius is 26 % of the GPR of the central speaker (4 semi-tones: $2^{4/12} \approx 1.26$, an increase of 26 %), and the VTL radius is 41 % of the VTL of the training speaker (6 semi-tones: $2^{6/12} \approx 1.41$, an increase of 41 %). The step size in the VTL dimension was deliberately chosen to be 1.5 times the step size in the GPR direction because the JND for speaker size is larger than that for pitch. The seven speakers along each spoke are spaced logarithmically in this log-log, GPR-VTL plane. The spoke pattern was rotated anti-clockwise by 12.4 degrees so that there was always variation in both GPR and VTL when the speaker changes. This angle was chosen so that two of the spokes form a line coincident with the line that joins the average man with the average woman in the GPR-VTL plane. The GPR-VTL combinations of the 56 different scaled speakers are presented in Table II.

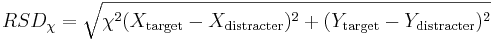

The important variable in both the ASR and the HSR experiments is the distance along a spoke from the reference voice at the center of the spoke pattern to a given test speaker. The distance is referred to as the Radial Scale Distance (RSD). It is the geometrical distance from the reference voice to the test voice, which is

$$\mathrm{RSD} = \sqrt{\chi^{2}\,(X_{\mathrm{test}} - X_{\mathrm{ref}})^{2} + (Y_{\mathrm{test}} - Y_{\mathrm{ref}})^{2}},$$

where X and Y are the coordinates of GPR and VTL, respectively, in this log-log space, and χ is the GPR-VTL trading value, 1.5. The speaker values were positioned along each spoke in logarithmically increasing steps on these logarithmic co-ordinates. The seven RSD values were 0.0071, 0.0283, 0.0637, 0.1132, 0.1768, 0.2546, and 0.3466. There are eight speakers associated with each RSD value, and their coordinates form an ellipse in the logGPR-logVTL plane. The seven ellipses are numbered from one to seven, beginning with the innermost ellipse, with an RSD value of 0.0071.
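The RSD of any speaker in Table II can be computed from its GPR and VTL. The sketch below rests on two assumptions that are not stated explicitly in the text: that the distance has the form $\sqrt{\chi^2 \Delta X^2 + \Delta Y^2}$ with the trading value applied to the GPR coordinate, and that X and Y are natural logarithms. Both were chosen because they reproduce the listed RSD values from the tabulated speakers; the function name `radial_scale_distance` is hypothetical.

```python
import math

CHI = 1.5             # GPR-VTL trading value
REF_GPR_HZ = 171.7    # central (reference) speaker
REF_VTL_MM = 146.9

def radial_scale_distance(gpr_hz, vtl_mm,
                          ref_gpr_hz=REF_GPR_HZ, ref_vtl_mm=REF_VTL_MM, chi=CHI):
    """Distance from the reference voice in the log-GPR, log-VTL plane.

    Assumes natural logarithms and the trading value applied to the GPR axis.
    """
    dx = math.log(gpr_hz / ref_gpr_hz)   # GPR coordinate difference
    dy = math.log(vtl_mm / ref_vtl_mm)   # VTL coordinate difference
    return math.sqrt((chi * dx) ** 2 + dy ** 2)

# Spoke 5 of Table II: innermost point (172.5 Hz, 14.7 cm) and outermost (215.2 Hz, 13.6 cm).
rsd_inner = radial_scale_distance(172.5, 147.0)
rsd_outer = radial_scale_distance(215.2, 136.0)
print(rsd_inner, rsd_outer)
```

Because the table entries are rounded, the results agree with the listed values (0.0071 and 0.3466) only approximately.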

| Spoke | Point: | 1 | 2 | 3 | 4 | 5 | 6 | 7 |
|---|---|---|---|---|---|---|---|---|
| 1 | GPR (Hz) | 170.9 | 168.6 | 164.7 | 159.5 | 153.0 | 145.5 | 137.0 |
|   | VTL (cm) | 14.7 | 14.8 | 14.9 | 15.1 | 15.3 | 15.5 | 15.8 |
| 2 | GPR (Hz) | 171.3 | 170.0 | 167.8 | 164.9 | 161.1 | 156.7 | 151.6 |
|   | VTL (cm) | 14.8 | 15.0 | 15.5 | 16.2 | 17.0 | 18.2 | 19.7 |
| 3 | GPR (Hz) | 171.9 | 172.4 | 173.3 | 174.5 | 176.1 | 178.1 | 180.4 |
|   | VTL (cm) | 14.8 | 15.1 | 15.6 | 16.4 | 17.5 | 18.8 | 20.6 |
| 4 | GPR (Hz) | 172.4 | 174.5 | 178.0 | 183.0 | 189.6 | 198.1 | 208.6 |
|   | VTL (cm) | 14.7 | 14.9 | 15.2 | 15.6 | 16.2 | 16.8 | 17.7 |
| 5 | GPR (Hz) | 172.5 | 174.9 | 179.0 | 184.8 | 192.7 | 202.7 | 215.2 |
|   | VTL (cm) | 14.7 | 14.6 | 14.5 | 14.3 | 14.1 | 13.9 | 13.6 |
| 6 | GPR (Hz) | 172.1 | 173.5 | 175.7 | 178.8 | 183.0 | 188.1 | 194.5 |
|   | VTL (cm) | 14.6 | 14.3 | 13.9 | 13.4 | 12.7 | 11.9 | 11.0 |
| 7 | GPR (Hz) | 171.5 | 171.0 | 170.1 | 168.9 | 167.4 | 165.6 | 163.4 |
|   | VTL (cm) | 14.6 | 14.3 | 13.8 | 13.2 | 12.4 | 11.5 | 10.5 |
| 8 | GPR (Hz) | 171.0 | 169.0 | 165.7 | 161.1 | 155.5 | 148.8 | 141.3 |
|   | VTL (cm) | 14.6 | 14.5 | 14.2 | 13.8 | 13.4 | 12.8 | 12.2 |

Table II. Vocal characteristics of the distracter voice. Point refers to the position on the spokes in Figure 1 ascending outwards from the reference speaker in the centre.

The Hidden-Markov Model Toolkit (HTK)

There is a software package referred to as the HMM Tool Kit (HTK) (Young et al., 2006) which can be used as a platform to produce recognizers. HTK models speech as a sequence of stationary segments, or frames, produced by a Hidden Markov Model (HMM). For an isolated syllable recognizer one HMM is used to model the production of each syllable. A database of wave files is converted to HTK files consisting of frames of feature vectors such as MFCCs. These HTK files are labeled by syllable and the parameters of the syllable models such as the output distribution and the transition probability of each state are estimated from a subsection of the database of HTK files. The rest of the database is used in the testing stage. Here the most probable HMM that produced each file is found, and it is assigned the syllable corresponding to that HMM as its transcription. The transcriptions generated are then compared to the true transcription or ‘labels’ of the files and a recognition score is calculated. (The operation of HTK is described in greater detail in the report “Establishing Norms for the Robustness of Automatic Speech Recognition”). In all of the experiments in this paper, the HMM recognizers were trained on the reference speaker of the scaled-syllable database, and the voices of ellipse number one, that is, the eight speakers closest to the reference speaker in the GPR-VTL plane. This procedure was intended to imitate the training of a standard, speaker-specific ASR system, which is trained on a number of utterances from a single speaker. The eight adjacent points provided the small degree of variability needed to produce a stable hidden Markov model for each syllable. The recognizers were then tested on all of the scaled speakers, excluding those used in training, to provide an indication of their relative performance. An HMM with 3 emitting states was used initially for all the recognizers, which is suitable for the duration of a single syllable. 
The HMM topology was varied and an optimal recognition value was found for each recognizer.
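The testing stage, in which each file is assigned the syllable whose HMM most probably produced it, can be sketched with the forward algorithm. This is not HTK code and does not use the HTK API: it is a minimal numpy illustration with hypothetical one-dimensional features, hand-set model parameters, and a simplified left-to-right topology that starts in the first emitting state and ends in the last.

```python
import numpy as np

def log_gauss(x, mean, var):
    """Log density of diagonal Gaussians: x is (T, D), mean and var are (S, D)."""
    diff = x[:, None, :] - mean[None, :, :]
    return -0.5 * np.sum(np.log(2.0 * np.pi * var)[None] + diff ** 2 / var[None], axis=2)

def forward_loglik(x, log_trans, mean, var):
    """Log likelihood of the frame sequence x under one left-to-right HMM."""
    log_b = log_gauss(x, mean, var)              # (T, S) emission log densities
    log_alpha = np.full(mean.shape[0], -np.inf)
    log_alpha[0] = log_b[0, 0]                   # start in the first emitting state
    for t in range(1, x.shape[0]):
        # Sum over predecessor states in the log domain, then emit frame t.
        log_alpha = np.logaddexp.reduce(log_alpha[:, None] + log_trans, axis=0) + log_b[t]
    return log_alpha[-1]                         # end in the last emitting state

def recognise(x, models):
    """Return the label of the model with the highest likelihood."""
    return max(models, key=lambda label: forward_loglik(x, *models[label]))

# Three emitting states with self-loops and forward transitions only.
trans = np.array([[0.6, 0.4, 0.0],
                  [0.0, 0.6, 0.4],
                  [0.0, 0.0, 1.0]])
with np.errstate(divide="ignore"):
    log_trans = np.log(trans)

# Hypothetical syllable models: state means and variances for a 1-D feature.
var = np.ones((3, 1))
models = {
    "ma": (log_trans, np.array([[0.0], [2.0], [4.0]]), var),
    "am": (log_trans, np.array([[4.0], [2.0], [0.0]]), var),
}

# Test 'file': nine frames that follow the state sequence of the "ma" model.
frames = np.array([0.0, 0.0, 0.0, 2.0, 2.0, 2.0, 4.0, 4.0, 4.0])[:, None]
print(recognise(frames, models))
```

In practice the state means, variances and transition probabilities are estimated from the training subset rather than set by hand, and the recognition score is the proportion of test files whose recognised label matches the true label.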

The Auditory Image Model (AIM) as a pre-processor for speech recognition

AIM-C is an implementation of the Auditory Image Model (AIM) in C++. It was used in this project to develop an auditory front-end for speech recognition. AIM-C uses five functions to analyze a wave file, simulating the following processes in the auditory system:

- Basilar Membrane Motion (BMM)

- The Neural Activity Pattern (NAP) produced in the auditory nerve

- The strobe times required to construct the auditory image

- The Stabilised Auditory Image (SAI)

- The Size Shape image (SSI) and its profiles.

The Basilar membrane is modeled as a gammatone filter-bank with many channels; the impulse response has a gamma envelope and a sinusoidal carrier. The period of the carrier and the envelope width decrease in inverse proportion to the center frequency of the filter. The filter center frequencies are evenly distributed on a quasi-logarithmic frequency scale (Glasberg and Moore, 1990), and there are typically about 75 filters covering the speech range (from 100 to 6000 Hz). The filter-bank produces what is essentially a wavelet transform of the sound, with a gammatone kernel, and this is AIM’s representation of basilar membrane motion (BMM). In the current study a gammatone filterbank with 200 channels was used to ensure that recognition performance was not limited by the resolution of the initial spectral transform. The center frequencies of the filters ranged from 86 to 16000 Hz. Power-law compression with an exponent of 0.8 was applied to profile magnitude. The BMM is transformed into a neural activity pattern (NAP) in the cochlea by the action of the outer and inner hair cells along the basilar membrane. The outer hair cells amplify the low-level sound that enters the cochlea. The inner hair cells convert the sound wave into neural pulses. Half-wave rectification is used to make the response to the BMM uni-polar like the response of the hair cell, while keeping it phase-locked to the peaks in the wave. Low-pass filtering can be used at this stage to simulate the progressive loss of phase-locking at higher frequencies, but it is switched off by default in AIM-C. A spectral profile may be produced from the NAP by integrating the representation over time. The NAP is converted into a stabilized auditory image (SAI) using strobed temporal integration (STI). The lower limit of pitch perception in humans is approximately 30 Hz, and pitches above 30 Hz are perceived as continuous sounds (Pressnitzer et al., 2001). 
This indicates that temporal integration occurs before the perception of sound and in AIM, it is part of the image construction process. A ‘strobing’ algorithm is used to mark times of significant activity in the NAP, such as glottal pulses. When a strobe point is located in a particular channel of the filter-bank, the next 33 ms of activity of the channel is added to the auditory image buffer for that channel. This time interval is based on the lower limit of pitch. When the next strobe point is reached, it also causes activity to be added to the image buffer. The buffer contents decay over time to simulate the way the perception fades when the sound stops. Temporal integration occurs between these strobed points. Communication sounds, both in humans and animals, are almost always pulse resonance sounds, that is, the sounds produced by source-filter systems. It seems likely that the auditory system is optimized for sounds of this form. Strobing preserves the internal temporal fine-structure of these sounds as it phase locks to the glottal pulse and aligns the resonances attached to the pulses in the auditory image, much in the way an oscilloscope can be made to produce a stabilized view of a repetitious waveform. The array of buffers forms a surface whose dimensions are channel number and time interval, and this is AIM’s representation of the stabilized auditory image that listeners hear in response to these sounds. The decay of the image is exponential with a 30 ms time constant and so the image shows a weighted sum of 5 to 10 glottal cycles, depending on the GPR, and it decays over about 100 ms when the sound ceases. The Size Shape image (SSI) is constructed from the information in the first cycle of the auditory image, taking the contents on the buffer from zero until the first glottal pulse ridge. The Size Shape Image is formed by scaling the time axis of the SAI by filter center frequency and converting to a logarithmic time-interval axis. 
The frequency scaling compensates for the contraction of the impulse response of the auditory filter as frequency increases. The vertical pitch cut-off line at the end of the cycle in the SAI becomes a diagonal line in the SSI due to the log time-interval transform. The position of the diagonal is proportional to center frequency and it intercepts the frequency axis at the GPR of the speaker. The SSI is a representation of our perception of the sound, referred to as our ‘Auditory Image’. This Auditory Image exists in a perceptual ‘space’ with dimensions of acoustic scale and impulse-response cycles (Patterson, van Dinther, and Irino, 2007). The axes of the SSI are quasi-log-scale and log-cycles. It is apparent that auditory perception is time shift covariant, as our perception of a particular sound does not differ if it is played at two reasonably separated times. This suggests that the cycles dimension is an extra dimension of auditory space, separate from time as we perceive it.
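As a rough illustration of the filterbank stage described above, the following Python sketch spaces 200 center frequencies evenly on the ERB-rate scale of Glasberg and Moore (1990) between 86 and 16000 Hz, and generates a gammatone impulse response (a gamma envelope multiplied by a sinusoidal carrier). The fourth-order filter and the bandwidth factor of 1.019 are conventional choices assumed here rather than values stated in the text, and all function names are hypothetical; this is not the AIM-C implementation.

```python
import math

def erb_rate(f_hz):
    # ERB-rate (quasi-logarithmic) scale of Glasberg and Moore (1990).
    return 21.4 * math.log10(4.37 * f_hz / 1000.0 + 1.0)

def erb_rate_to_hz(e):
    # Inverse of erb_rate().
    return (10.0 ** (e / 21.4) - 1.0) * 1000.0 / 4.37

def erb_bandwidth(f_hz):
    # Equivalent rectangular bandwidth (Hz) at frequency f_hz.
    return 24.7 * (4.37 * f_hz / 1000.0 + 1.0)

def center_frequencies(f_lo, f_hi, n_channels):
    # Channels evenly spaced on the ERB-rate scale between f_lo and f_hi.
    e_lo, e_hi = erb_rate(f_lo), erb_rate(f_hi)
    step = (e_hi - e_lo) / (n_channels - 1)
    return [erb_rate_to_hz(e_lo + i * step) for i in range(n_channels)]

def gammatone_ir(fc, fs, duration, order=4, b=1.019):
    # Assumed conventional parameters: order 4, bandwidth factor 1.019.
    # Envelope t^(order-1) * exp(-2*pi*b*ERB(fc)*t), carrier cos(2*pi*fc*t).
    n_samples = int(duration * fs)
    decay = 2.0 * math.pi * b * erb_bandwidth(fc)
    out = []
    for i in range(n_samples):
        t = i / fs
        out.append(t ** (order - 1) * math.exp(-decay * t)
                   * math.cos(2.0 * math.pi * fc * t))
    return out

# Channel layout quoted in the text: 200 channels from 86 to 16000 Hz.
cfs = center_frequencies(86.0, 16000.0, 200)
# Unnormalized impulse response of a 1-kHz channel, 25 ms at 16 kHz.
ir = gammatone_ir(1000.0, 16000.0, 0.025)
```

Because the ERB grows with center frequency, the envelope of the impulse response shortens as the carrier period shortens, which is the contraction that the frequency scaling of the SSI compensates for.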

Summarizing the spectral profiles of AIM in low-dimensional feature vectors

The spectral profiles of the Auditory Image Model

In AIM, spectral profiles can be produced from both the NAP representation of sound and the SSI representation of sound; they have the same quasi-log-frequency dimension and they are both scale-shift covariant. Profiles from both the NAP and the SSI were used to produce feature vectors and the performance supported by the two forms of feature vectors was compared. Although the long-term spectra of these profiles are conceptually similar, the temporal integration employed to construct them is somewhat different. The NAP is a traditional time-frequency representation like the spectrogram and the spectral profiles are produced like the spectral vectors of the spectrogram. That is, the activity in each channel of the NAP is smoothed by low-pass filtering with a cut-off frequency of 100 Hz; then, the NAP is framed into 10-ms segments and the NAP profile is produced by integrating the activity across the frame. The NAP profile takes less time to compute than the SSI profiles, which could be a distinct advantage in some instances. Conceptually, however, the magnitude of the NAP profile is more variable because the temporal integration is not synchronized to the GPR. Two profiles can be defined from the orthogonal dimensions of the SSI (Patterson, van Dinther, and Irino, 2007): the spectral profile, formed by integrating along the cycles axis and the ‘temporal’ or cycles profile, formed by integrating along the frequency, or acoustic scale, axis. For a given speech sound, like a sustained vowel, a change in VTL does not change the form of the auditory activity in the SSI; the activity pattern merely moves vertically as a unit. The extent of the shift is essentially the logarithm of the ratio of the VTL of the sources. A change in pitch causes no change in the SSI other than a movement of the diagonal pitch cut-off line, which moves vertically while retaining the same gradient. This pitch line defines the extent of the Auditory Image but does not otherwise change its form. 
When GPR is increased, the pitch line shifts vertically upwards, which attenuates the cycles profile with little effect on its shape. The spectral profile is also truncated as GPR increases since there is, by definition, no activity below f0 in a periodic sound. As the activity pattern does not change with VTL or GPR, any changes that arise in the form of the SSI will be due to changes in the sound produced by changes in vocal tract shape. When the spectral profiles are shifted along the frequency axis by an amount proportional to the relative difference in VTL, the profiles will be overlaid. Any measure restricted to the form, or relative positions, of features in the profile will be invariant to changes in VTL. The profiles of consecutive frames of the SSI can be used to provide the sequence of one-dimensional feature vectors for an ASR system. The sequence of profiles functions like the spectrographic input of a traditional ASR system. The cycles profile of the SSI is theoretically scale-shift invariant, so it might improve robustness if it could be reduced to a small number of features that could be added to the feature vector. However, in the current study, the SSI feature vector is based solely on the spectral profile of the SSI. The SSI is a stabilized version of the repeating pattern produced by the stream of glottal pulses, so it can be sampled at any rate. In this study, it was sampled every 10 ms to match the frame rate of the NAP profile, and the frame rate of the MFCC feature vectors. In one condition of the study, the details of the profiles were smoothed as illustrated in the lower panel of Fig. 1, using a Discrete Cosine Transform (DCT); the high frequency components of the DCT were removed and the smoothed profile was produced by inverse transforming the first 10 of the 200 DCT coefficients. The performance of the recognizer with these DCT-smoothed profiles was compared with that produced when the smoothing was omitted.
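The DCT smoothing step can be sketched as follows, under the assumption that an orthonormal DCT-II and its inverse were used (the text does not say which DCT variant was applied). The sketch transforms a 200-channel profile, zeroes all but the first 10 coefficients, and transforms back. The toy profile and the function names are hypothetical.

```python
import math

def dct2(x):
    # Orthonormal DCT-II of a sequence x.
    n = len(x)
    out = []
    for k in range(n):
        s = sum(x[i] * math.cos(math.pi * (i + 0.5) * k / n) for i in range(n))
        scale = math.sqrt(1.0 / n) if k == 0 else math.sqrt(2.0 / n)
        out.append(scale * s)
    return out

def idct2(c):
    # Inverse of dct2() (orthonormal DCT-III).
    n = len(c)
    out = []
    for i in range(n):
        s = c[0] * math.sqrt(1.0 / n)
        s += sum(c[k] * math.sqrt(2.0 / n)
                 * math.cos(math.pi * (i + 0.5) * k / n) for k in range(1, n))
        out.append(s)
    return out

def dct_smooth(profile, keep=10):
    # Keep the first `keep` DCT coefficients, zero the rest, and invert.
    coeffs = dct2(profile)
    truncated = coeffs[:keep] + [0.0] * (len(coeffs) - keep)
    return idct2(truncated)

def roughness(x):
    # Sum of squared differences between adjacent channels.
    return sum((b - a) ** 2 for a, b in zip(x, x[1:]))

# Toy profile (hypothetical): two broad peaks plus a fine ripple.
profile = [math.exp(-0.5 * ((c - 60) / 12.0) ** 2)
           + 0.6 * math.exp(-0.5 * ((c - 140) / 12.0) ** 2)
           + 0.05 * (1.0 + math.cos(2.0 * c)) for c in range(200)]
smoothed = dct_smooth(profile, keep=10)
```

Since the zeroth coefficient is retained, the smoothed profile has the same total as the original; only the fine detail across channels is removed.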

Summarizing the formant frequency information of the profiles in a low-dimensional feature vector

The ‘expectation-maximization’ (EM) algorithm of Dempster, Laird, and Rubin (1977) was modified and used to fit a mixture of four Gaussians to each profile. The motivation for the use of a small number of Gaussians is, broadly speaking, as follows: The spectrum of a vowel, or a sonorant consonant, is assumed to contain three concentrations of energy that identify the three main resonances, or formants, of the vocal tract. The fourth formant is relatively close to the third in these profiles and so it is presumed that it does not warrant a separate Gaussian. When the profile is plotted as linear magnitude versus log-frequency, the formants have a roughly Gaussian shape, the width of the formants is not markedly different, and the energy spans a frequency range that is about four Gaussians wide. An example is presented in Fig. 5, which shows the SSI profile of an /i/ vowel (green) and the four Gaussians used to summarize it. Three of the Gaussians isolate the main concentrations of energy in the stimulus – the formants; the fourth Gaussian codes the fact that there is a large gap between the first and second formants in the case of the /i/ vowel. The illustration suggests that a reasonable feature vector would be something like the center frequencies, bandwidths, and peak magnitudes of these four Gaussians; this would produce a feature vector with 12 dimensions like the standard MFCC feature vector. The performance of the EM algorithm, however, suggested that 12 dimensions are too many. Although the EM algorithm always converges to a solution, when the fit has 12 degrees of freedom, the solution is excessively variable; the solution varies both between and within tokens of one vowel, in ways that indicate it is overfitting to unimportant details in individual profiles. One problem is that the algorithm sometimes fits very narrow Gaussians to individual harmonics at the low frequency end of the spectrum. 
To produce a consistent sequence of feature vectors, both for the vowels within syllables and the syllables themselves, it was necessary to reduce the number of parameters and modify the EM algorithm. Since the width of the formants is not markedly different on a log-frequency axis, the variance of the Gaussians was set to a single value (115 square-channels), eliminating four degrees of freedom. This also prevents the fit from using narrow Gaussians to fit individual harmonics and forces it to use one Gaussian in the region of the first formant as illustrated in Fig. 5. A preliminary experiment was performed with the vowel sounds only to determine a reasonable value for the fixed variance of the four Gaussians. The profiles were normalized to sum to unity and then treated as a probability density function, that is, four Gaussians (whose total area summed to unity) were fitted to the profile. It is this mixture of Gaussians which provides the model of the probability density function that is the basis of the feature vector. The features themselves were the weights of the four Gaussians, which since they sum to one can be summarized as three parameters. The log of the energy of the un-normalized profile was included as is traditional with HMM recognizers. Even without including information about the center frequencies of the Gaussians, performance was immediately higher than that achieved with the MFCC recognizer, and once the parameters were optimized, it increased to 100%. This prompted us to run the same experiment with this minimal feature vector on the complete syllable database, and the initial performance was again higher than the optimized MFCC performance. Occasionally, the fitting routine would assign two Gaussians with slightly different center frequencies to what appeared to be a single formant. Accordingly a cost constraint was included to prevent the fit from placing the means of the Gaussians too close together. 
With this constraint, and four Gaussians of the same width, the system typically used three Gaussians with large weights to fit formants and one Gaussian with a small weight that essentially coded the presence of a gap in the profile between two of the formants, or at one of the ends. It was observed that limiting the degree to which the Gaussians were allowed to overlap also made the basis functions more orthogonal. To further reduce variability in the fits associated with sensitivity to the initialization values, a two-stage fitting procedure was used. Before fitting, the profiles were normalized to sum to unity. There was an initialization step which involved fitting two Gaussians to the profile, and using the interval between the means of these Gaussians to provide an initial position for the four Gaussians in the second stage. It is this model that was used to produce the recognition results with the auditory pre-processor reported in the next section.
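The core of the fitting procedure can be sketched in Python as a weighted EM loop in which the normalized profile is treated as a probability density over channel number and all four Gaussians share the fixed variance of 115 square-channels quoted above. This is a simplified sketch, not the authors' modified algorithm: the cost constraint on the separation of the means and the two-Gaussian initialization stage are omitted, the means are simply initialized at evenly spaced channels (an assumption), and the energy is taken to be the sum of the un-normalized profile (also an assumption). All names are hypothetical.

```python
import math

FIXED_VARIANCE = 115.0  # square-channels, the value quoted in the text

def fit_fixed_variance_mixture(profile, n_components=4,
                               variance=FIXED_VARIANCE, n_iter=50):
    # Normalize the profile to sum to unity and treat it as a density
    # over channel index.
    total = sum(profile)
    p = [v / total for v in profile]
    n = len(p)
    # Assumption: evenly spaced initial means (the paper instead uses a
    # preliminary two-Gaussian fit to position the four Gaussians).
    means = [(k + 0.5) * n / n_components for k in range(n_components)]
    weights = [1.0 / n_components] * n_components
    for _ in range(n_iter):
        new_w = [0.0] * n_components
        new_m = [0.0] * n_components
        for c, pc in enumerate(p):
            if pc == 0.0:
                continue
            # E-step: responsibility of each Gaussian for channel c. The
            # Gaussian normalizing constant cancels because the variance
            # is shared by all components.
            like = [w * math.exp(-0.5 * (c - m) ** 2 / variance)
                    for w, m in zip(weights, means)]
            norm = sum(like)
            if norm == 0.0:
                continue
            for k in range(n_components):
                r = pc * like[k] / norm
                new_w[k] += r
                new_m[k] += r * c
        # M-step: update weights and means only; the variance stays fixed.
        means = [new_m[k] / new_w[k] if new_w[k] > 0.0 else means[k]
                 for k in range(n_components)]
        w_sum = sum(new_w)
        weights = [w / w_sum for w in new_w]
    return weights, means

def bump(c, center):
    # Gaussian-shaped peak with the fixed variance, for the toy profile.
    return math.exp(-0.5 * (c - center) ** 2 / FIXED_VARIANCE)

# Toy profile (hypothetical): three formant-like peaks over 200 channels.
profile = [3.0 * (0.5 * bump(c, 40) + 0.3 * bump(c, 100) + 0.2 * bump(c, 160))
           for c in range(200)]
weights, means = fit_fixed_variance_mixture(profile)
# Static features: log energy plus three weights (the fourth weight is
# determined by the other three because the weights sum to one).
features = [math.log(sum(profile))] + weights[:3]
```

Fixing the variance removes four degrees of freedom and prevents any component from collapsing onto a single harmonic, which is the behaviour the text identifies as the main source of variability in the unconstrained fit.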

In summary, a four-dimensional, auditory feature vector was produced using the logarithm of the energy of the original profile, and three of the Gaussian weights (the fourth being linearly dependent on the others). Experiments were also performed with larger feature vectors which included information about the formant frequencies; specifically, we added three features that were linear combinations of the Gaussian means and which were designed to be scale invariant. These extended feature vectors did, indeed, improve performance, as would be expected, but the improvement was only just over one percent. First and second difference coefficients were computed between temporally adjacent feature vectors and added to the feature vector in all cases (as is standard in HMM recognizers). Thus, the length of the AIM feature vectors passed to the recognizer was 12 components (or dimensions), whereas it was 39 components (or dimensions) for the MFCC feature vectors. Having feature vectors with a lower dimensionality should reduce the time taken to run the training and recognition algorithms.
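The arithmetic behind the 12-component vector can be illustrated with a short Python sketch that appends first and second difference coefficients to a sequence of four-dimensional static vectors. The central-difference formula with replicated edge frames is an assumption made for illustration; the recognizer toolkit may compute these coefficients with a different regression window. The function names and the toy data are hypothetical.

```python
def deltas(frames):
    # Assumed formula: central difference between the neighbouring frames,
    # with the first and last frames replicated at the edges.
    n = len(frames)
    out = []
    for t in range(n):
        prev = frames[max(t - 1, 0)]
        nxt = frames[min(t + 1, n - 1)]
        out.append([(b - a) / 2.0 for a, b in zip(prev, nxt)])
    return out

def add_dynamic_features(frames):
    # Static features, then first differences, then second differences.
    d1 = deltas(frames)
    d2 = deltas(d1)
    return [s + a + b for s, a, b in zip(frames, d1, d2)]

# Toy sequence (hypothetical): log energy rising by 1 per frame,
# with three constant Gaussian weights.
static = [[float(t), 0.5, 0.3, 0.2] for t in range(5)]
full = add_dynamic_features(static)
# Each frame now has 4 static + 4 first + 4 second differences = 12 values.
```

The same tripling accounts for the 39 components of the MFCC vectors if they contain 13 static coefficients per frame, which is the common configuration but is assumed here rather than stated in the text.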

RESULTS AND DISCUSSION

HMM recognizer operating on MFCC feature vectors

In the initial experiment with the MFCC feature vectors, the recognizer was based on an HMM with a topology that had three emitting states and a single Gaussian output distribution for each state. The recognizer was trained on the original speaker and the eight speakers on the smallest ellipse nearest to the original speaker. The average recognition accuracy for this configuration, over the entire GPR-VTL plane, was only 65.0 %. There is no straightforward optimization technique for HMM topology. To ensure that the results were representative of HMM performance, a number of different topologies were trained and tested. Performance was maximized for an HMM topology consisting of four emitting states, with several Gaussian mixtures making up the output distributions for each emitting state. The number of training stages was also varied to avoid over-training. The optimum performance, using the best topology, was 73.5 % after nine iterations of the training algorithm HERest. A further experiment was carried out using MFCCs produced from a 200 channel mel ‘filterbank’ to check that the performance of the recognizer was not being limited by a lack of spectral resolution. The performance using these MFCCs was 67.7 % for the initial topology with three emitting states and 73.3 % using the best topology from the previous experiments, indicating that the resolution of the 26 channels is not a serious limitation. The performance for individual speakers, using this topology, is shown in Fig. 6. There is a central region adjacent to the training data for which performance is 100 %; it includes the second ellipse of speakers and several speakers along spokes 1 and 5 where VTL does not vary much from that of the reference speaker. As VTL varies further from the training values, performance degrades rapidly. This is particularly apparent in spokes three and seven, where recognition falls close to 0 % for the extremes, and to a lesser extent on spokes two, four, six and eight. 
This demonstrates that this MFCC recognizer cannot extrapolate beyond its training data to speakers with different VTL values. In contrast, performance remains consistently high along spokes 1 and 5, where the main variation is in GPR. This is not surprising since the process of extracting MFCCs eliminates most of the GPR information from the features. This figure shows the performance that sets the standard for comparison with the auditory feature vectors.

HMM recognizer operating on AIM feature vectors from the SSI profile

In the initial experiment with the SSI feature vectors, the recognizer was based on an HMM with a topology that had three emitting states and a single Gaussian output distribution for each state, so the recognition stage is effectively the same as for the MFCC recognizer. Similarly, the recognizer was initially trained on the original speaker and the eight speakers on the smallest ellipse nearest to the original speaker. The initial recognition rate using the SSI feature vectors was 84.6 % over the full range of speakers across the GPR-VTL plane; this is well above the initial performance with MFCC feature vectors. Performance was maximized for an HMM topology consisting of two emitting states, with several Gaussian mixtures making up the output distributions for each emitting state. The number of training stages was also varied to avoid over-training. After optimization of the topology and nine iterations of the training algorithm, HERest, the performance rose to 90.7 %, which is well above the 73.5 % achieved after similar optimization with the MFCC feature vectors. In this initial fit, the production of the feature vectors included DCT spectral smoothing. When the training and testing were rerun with the raw feature vectors (i.e., without spectral smoothing) performance was essentially unaltered, both in average performance and the performance associated with individual speakers.

Performance obtained using this topology for the individual speakers across the GPR-VTL plane, is shown in Fig. 7. As with the MFCC recognizer, performance is best along spokes one and five. However, unlike the MFCC recognizer, performance along most of the spokes is near ceiling after optimization. The worst performance, for the speaker at the end of spoke three, was 66.5 %, which compares with 3.8 % in the MFCC case. Average performance is limited to 90.7 % by a drop in performance at the extremes of spokes three and seven, although the drop is small in comparison to that seen in the MFCC case. The results indicate that there is still some sensitivity to VTL change in the AIM feature vectors. Since it affects only the extreme VTL conditions, it seems likely that it is due to edge effects at the fitting stage. That is, when a feature occurs near the edge of the spectrum, the tail of the Gaussian used to fit the spectral feature prevents it from shifting sufficiently to center the Gaussian on the feature. If this proves to be the reason, it suggests that performance is not limited by the underlying auditory representation (the SSI) but rather by a limitation in the feature extraction that should be amenable to improvement.

HMM recognizer operating on AIM feature vectors from the NAP profile

When the experiment was repeated using the spectral profile of the NAP to generate the feature vectors rather than the spectral profile of the SSI, the results were found to be marginally, although probably not significantly, better than those achieved with the SSI profile. In the initial experiment with the NAP feature vectors, the recognizer was again based on an HMM with a topology that had three emitting states and a single Gaussian output distribution for each state. Similarly, the recognizer was initially trained on the original speaker and the eight speakers on the smallest ellipse nearest to the original speaker. Average performance for the recognizer operating on NAP feature vectors was 86.9 % over the full range of speakers across the GPR-VTL plane. This is once again well above the initial performance with MFCC feature vectors, at 65 %, and it is even a little better than the performance with the SSI feature vectors, at 84.6 %. Performance was maximized for an HMM topology consisting of two emitting states, with several Gaussian mixtures making up the output distributions for each emitting state. After optimization of the topology and 12 iterations of the training algorithm, performance rose to 92.3 %, well above the optimal performance with MFCC feature vectors, at 73.5 %, and a little better than the optimal performance with the SSI feature vectors, at 90.7 %. Although the performance of the NAP recognizer took a few more iterations to converge to its maximum than either the MFCC recognizer or the SSI recognizer, performance after 9 iterations was within one percent of its eventual maximum value.

Performance for the individual speakers, obtained using the optimum topology, is shown in Fig. 8. The distribution of performance across the VTL-GPR plane is essentially the same as that achieved with the SSI recognizer; performance is best for spokes one and five, and falls a little at the ends of spokes three and seven where the most extreme values of VTL occur. The sensitivity to VTL change is similar to that with the SSI feature vectors which supports the hypothesis that the deterioration in performance is due to edge effects during the fitting of the Gaussians to the spectral profile. In this initial fit, the production of the feature vectors included DCT spectral smoothing. When the training and testing were rerun with the raw feature vectors (i.e., without spectral smoothing) performance was essentially unaltered, both in average performance, 91.6%, and the performance associated with individual speakers. In summary, the DCT smoothing affects performance with the AIM feature vectors (either NAP or SSI features) by less than 1 %, and so this stage is probably unnecessary. In retrospect, it appears that the Gaussian fitting does all of the smoothing that is necessary.

CONCLUSIONS

In an effort to improve the robustness of ASR recognizers to variation in speaker size, a new form of feature vector was developed, based on the spectral profiles of the NAP and SSI stages of the Auditory Image Model (AIM). The value of the new feature vectors was demonstrated using an HMM syllable recognizer, which was trained on a small number of speakers with similar GPRs and VTLs and then tested on speakers with widely different GPRs and VTLs. Performance was compared to that supported by a traditional ASR system with essentially the same HMM recognizer operating on MFCC feature vectors. When tested on the full range of scaled speakers, performance with the AIM feature vectors (either NAP based or SSI based) was shown to be significantly better (~ 91 %) than that with the MFCC feature vectors (~ 73 %). Moreover, the auditory feature vectors are far smaller (12 components) than the MFCC feature vectors (39 components). The study demonstrates that the high resolution, spectral profiles typical of auditory models can be successfully summarized in low-dimensional feature vectors for use with recognition systems based on standard HMM techniques. The paper includes an analysis of why MFCC features lead to a recognizer that is not robust to variation in speaker size. Basically, the problem is that the individual cepstral coefficients change value in a complicated way with changes in VTL. As a result, children and adults produce clusters in different parts of the recognition space.

Acknowledgements

The research was supported by the UK Medical Research Council (G0500221; G9900369) and by the Air Force Office of Scientific Research, Air Force Material Command, USAF, under grant number FA8655-05-1-3043. The U.S. Government is authorized to reproduce and distribute reprints for Government purpose notwithstanding any copyright notation thereon. The views and conclusions contained herein are those of the author and should not be interpreted as necessarily representing the official policies or endorsements, either expressed or implied, of the Air Force Office of Scientific Research or the U.S. Government.

REFERENCES AND BIBLIOGRAPHY

Allen, J.B. (2005). Articulation and intelligibility. In D. Pisoni, R. Remez (Eds.), Handbook of speech perception. Blackwell: Oxford UK

Cohen, L. (1993). The scale transform. IEEE Trans. ASSP, 41, 3275-3292.

Dempster, A. P., Laird, N. M., Rubin, D. B. (1977). Maximum Likelihood from Incomplete Data via the EM Algorithm. Journal of the Royal Statistical Society, Series B (Methodological), 39, 1-38.

Dudley, H. (1939). Remaking speech, Journal of the Acoustical Society of America, 11, 169-177.

Fitch, W.T., Giedd, J. (1999) Morphology and development of the human vocal tract: a study using magnetic resonance imaging. Journal of the Acoustical Society of America, 106, 1511-1522.

Glasberg, B. R., Moore, B. C. J., (1990) Derivation of auditory filter shapes from notched-noise data. Hearing Research 47, 103-138

Irino, T., Patterson, R.D. (2002). Segregating Information about Size and Shape of the Vocal Tract using the Stabilised Wavelet-Mellin Transform, Speech Communication, 36, 181-203.

Kawahara, H., Irino, T. (2005). Underlying principles of a high-quality, speech manipulation system STRAIGHT, and its application to speech segregation. In P. Divenyi (Ed.), Speech separation by humans and machines. Kluwer Academic: Massachusetts, 167-179.

Marcus, S. M. (1981). Acoustic determinants of perceptual center (p-center) location. Perception and Psychophysics, 52, 691-704.

Patterson, R.D., Holdsworth, J. and Allerhand M. (1992). Auditory Models as preprocessors for speech recognition. In: M. E. H. Schouten (Ed.), The Auditory Processing of Speech: From the auditory periphery to words. Mouton de Gruyter, Berlin, 67-83.

Patterson, R.D., Smith, D.R. R., van Dinther, R., and Walters, T. C. (2006). Size Information in the Production and Perception of Communication Sounds. In W. A. Yost, A. N. Popper and R. R. Fay (Eds.), Auditory Perception of Sound Sources. Springer US, 43-75

Patterson, R. D., van Dinther, R. and Irino, T. (2007). “The robustness of bio-acoustic communication and the role of normalization,” Proc. 19th International Congress on Acoustics, Madrid, Sept, ppa-07-011.

Peterson, G.E., Barney, H.L. (1952). Control methods used in a study of the vowels. Journal of the Acoustical Society of America, 24, 175-184.

Pressnitzer, R., Patterson, R.D., and Krumbholz, K. (2001). The lower limit of melodic pitch. Journal of the Acoustical Society of America, 109, 2074-2084.

Scott, S. K. (1993). P-centres in Speech: An Acoustic Analysis. Doctoral dissertation, University College London.

Stuttle, M.N. and Gales, M.J.F. (2001). A Mixture of Gaussians front end for speech recognition. Eurospeech,

Smith, D.R.R., Patterson, R.D., Turner, R., Kawahara, H., Irino, T. (2005). The processing and perception of size information in speech sounds. Journal of the Acoustical Society of America, 117, 305-318.

Young, S., Evermann, G., Gales, M., Hain, T., Kershaw, D., Liu, X., Moore, G., Odell, J., Ollason, D., Povey, D., Valtchev, V., Woodland, P. (2006). The HTK Book (for HTK version 3.4). Cambridge University Engineering Department, Cambridge.