Establishing Norms for the Robustness of Human Speech Recognition

From CNBH Acoustic Scale Wiki

Roy D. Patterson, Nick Fyson, Jessica Monaghan, Thomas C. Walters and Martin D. Vestergaard

The overall purpose of this research project is to develop a practical auditory preprocessor for automatic speech recognition (ASR) that adapts to glottal-pulse rate (GPR) and normalizes for vocal tract length (VTL) without the aid of context and without the need for training. The auditory preprocessor would make ASR much more robust in noisy environments, and more efficient in all environments. The first phase of the research involved constructing a version of the auditory image model (AIM) for use at AFRL/IFEC with documentation illustrating how the user can perform GPR and VTL normalization. The current report describes the development of performance criteria, or ‘norms’, for the robustness of human speech recognition (HSR) – norms that can also be used to compare the robustness of HSR with the lack of robustness in existing commercial recognizers. A companion report describes the development of comparable norms for the robustness of automatic speech recognition (ASR) using traditional ASR techniques, involving Mel Frequency Cepstral Coefficients (MFCCs) as features and Hidden Markov Models (HMMs). The results show that the traditional ASR system is much less robust to changes in vocal tract length than HSR, as was predicted. The ASR test is valuable in its own right inasmuch as it can be run on prototype systems as they are developed to assess their inherent robustness, relative to the traditional ASR recognizer, and to track improvements in robustness as ASR performance converges on HSR performance.

The text and figures on this page are from a project report associated with an EOARD grant (FA8655-05-1-3043) entitled ‘Measuring and Enhancing the Robustness of Automatic Speech Recognition’.

Roy Patterson, Jessica Monaghan, Tom Walters


INTRODUCTION

When a child and an adult say the same word, it is only the message that is the same. The child has a shorter vocal tract and lighter vocal cords, and as a result, the waveform carrying the message is quite different for the child. The fact that we hear the same message shows that the auditory system has some method for ‘normalizing’ speech sounds for both Glottal Pulse Rate (GPR) and Vocal Tract Length (VTL). These normalization mechanisms are crucial for extracting the message from the sound that carries it from speaker to listener. They also appear to extract specific VTL and GPR values from syllable-sized segments of an utterance as it proceeds. In noisy environments with multiple speakers, the brain may well use these streams of GPR and VTL values to track the target speaker and so reduce confusion. Current speech recognition systems use spectrographic preprocessors that preclude time-domain normalization like that in the auditory system, and this could be one of the main reasons why ASR is so much less robust than Human Speech Recognition (HSR) in multi-source environments (MSEs). To wit, Potamianos et al. (1997) have shown that a standard speech-recognition system (hidden Markov model) trained on the speech of adults, has great difficulty understanding the speech of children. This new perspective, in which GPR and VTL processing are viewed as preliminary normalization processes, was made possible by recent developments in time-scale analysis (Cohen, 1993), and the development of an auditory model (Irino and Patterson, 2002) showing how Auditory Frequency Analysis (AFA) and auditory GPR normalization could be combined with VTL normalization to extract the message of the syllable from the carrier sound.

The overall purpose of this research project is to develop a practical auditory preprocessor for ASR systems that performs GPR and VTL normalization without the aid of context and without the need for training. The auditory preprocessor would make ASR much more robust in noisy environments, and more efficient in all environments. The first phase of the research involved constructing a version of AIM for use at AFRL/IFEC with documentation illustrating how the user can perform GPR and VTL normalization. The current report describes the development of performance criteria, or ‘norms’, for the robustness of human speech recognition (HSR) – norms that can also be used to compare the robustness of HSR with the lack of robustness in existing commercial recognizers. The results show that HSR is singularly robust to variation in VTL. A companion report describes the development of comparable norms for the robustness of automatic speech recognition (ASR) using traditional ASR techniques, involving Mel Frequency Cepstral Coefficients (MFCCs) as features and Hidden Markov Models (HMMs). The remainder of this introduction describes the internal structure of speech sounds and the concept of normalization as it pertains to speech sounds.

The internal structure of speech sounds

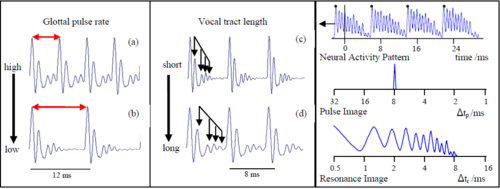

At the heart of each syllable of speech is a vowel; Figure 1 shows four versions of the vowel /a/ as in ‘hard’. From the auditory perspective, a vowel is a ‘pulse-resonance’ sound, that is, a stream of glottal pulses each with a resonance showing how the vocal tract responded to that pulse. Many animal calls are pulse resonance sounds. From the speech perspective, the vowel contains three important components of the information in the larger communication. For the vowels in Fig. 1, the ‘message’ is that the vocal tract is currently in the shape that the brain associates with the phoneme /a/. This message is contained in the shape of the resonance which is the same in every cycle of all four waves. In the left column, one person has spoken two versions of /a/ using high (a) and low (b) GPRs; the pulse rate (PR) determines the pitch of the voice. The resonances are identical since it is the same person speaking the same vowel. In the right-hand column, a small person (c) and a large person (d) have spoken versions of /a/ on the same pitch. The pulse rate and the shape of the resonance are the same, but the rate at which the resonance proceeds within the glottal cycle is slower in the lower panel. This person has the longer vocal tract and so their resonance rings longer. VTL is highly correlated with the height of the speaker (Fitch and Giedd, 1999). In summary, GPR corresponds to the pitch of the voice, the shape of the resonance corresponds to the message, and resonance rate corresponds to VTL and, thus, to speaker size.

Normalization of speech sounds

The essence of GPR and VTL normalization is illustrated in Fig. 2; it shows how the information in the vowel sound, /a/, can be segregated and used to construct a ‘pulse image’ and a ‘resonance image’, each with its own time-difference dimension, $\Delta t_p$ and $\Delta t_r$. The scale is logarithmic in both cases. The upper panel shows the neural activity produced by the /a/ sound of Fig. 1 in the auditory filter centred on the second formant of the vowel (near 1500 Hz). The largest peaks in the wave are from the glottal pulses; they bound the resonances in time and control the pulse normalization process. It is assumed that when the neural representation of a glottal pulse, $p_n$, reaches the brainstem, it initiates a pulse process and a resonance process, both of which run until the next glottal pulse arrives. The pulse process simply waits for the next pulse, and when it arrives, calculates the time difference, $\Delta t_p = t_{n+1} - t_n$, and increments the corresponding bin by the height of the pulse. The pulse, $p_{n+1}$, terminates the $p_n$ process and initiates a new pulse process, and so on. The image decays continuously in time with a half life of about 30 ms, so when the period of the wave is 10 ms or less, the peak in the image is essentially stable because it is incremented three or more times in the time it would take to decay to half its height. This is the essence of Pulse-Rate Normalization (PRN). The resonance process is very similar to the pulse process; however, during the time between pulses, it adds a copy of the resonance behind the current pulse into the resonance image. So the resonance values in the image are each incremented by the value of the resonance at $t - t_n$. The half life of the resonance image is the same as that of the pulse image. Now consider the pulse and resonance images that would be produced by the waves in Figure 1. The wave in (a) would produce a stable peak at 6 ms in the pulse image and a stabilized version of the resonance in the resonance image.
When the glottal period increases to 12 ms as in (b), the peak at 6 ms in the pulse image would decay away over about 100 ms as a new peak rises at 12 ms. The resonance in the region $0 < \Delta t_r < 6$ ms would not change. When the resonance expands as in (d), the peak in the pulse image at $\Delta t_p = 8$ ms would be unaffected. The resonance function would shift a little to the left (larger $\Delta t_r$ values) without changing shape. This is the essence of Resonance Scale Normalization (RSN). The resonance shape is the message; the distance from the origin represents the length of the vocal tract. These processes can be applied to all of the channels of AFA to produce the complete analysis of the syllable. In summary, these simple normalization processes segregate the GPR information from the VTL information, and segregate the resonance scale information from the resonance shape information (the message).
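The pulse process can be illustrated with a small sketch. It is not the AIM implementation; it assumes that pulse times and heights have already been extracted from one channel, applies the 30-ms half life at each pulse arrival rather than continuously, and uses hypothetical names (`log_bin_edges`, `pulse_image`) and a hypothetical ten-bin logarithmic axis from 1 to 32 ms.

```python
HALF_LIFE_MS = 30.0  # half life of the image, as quoted in the text


def log_bin_edges(lo_ms, hi_ms, count):
    """Edges of `count` bins spaced logarithmically between lo_ms and hi_ms."""
    ratio = hi_ms / lo_ms
    return [lo_ms * ratio ** (i / count) for i in range(count + 1)]


def pulse_image(pulse_times_ms, pulse_heights, edges_ms):
    """Decaying histogram of the intervals between successive pulses."""
    image = [0.0] * (len(edges_ms) - 1)
    for n in range(1, len(pulse_times_ms)):
        dt = pulse_times_ms[n] - pulse_times_ms[n - 1]
        # Let the whole image decay for the time since the previous pulse.
        decay = 0.5 ** (dt / HALF_LIFE_MS)
        image = [value * decay for value in image]
        # Increment the bin that holds this interval by the pulse height.
        for b in range(len(image)):
            if edges_ms[b] <= dt < edges_ms[b + 1]:
                image[b] += pulse_heights[n]
                break
    return image


edges = log_bin_edges(1.0, 32.0, 10)

# Fifty pulses with a 6-ms period, as for wave (a).
steady = [6.0 * k for k in range(50)]
image_a = pulse_image(steady, [1.0] * len(steady), edges)

# The same train followed by ten pulses with a 12-ms period, as for wave (b).
slower = steady + [steady[-1] + 12.0 * k for k in range(1, 11)]
image_b = pulse_image(slower, [1.0] * len(slower), edges)
```

With these inputs the peak of `image_a` sits in the bin containing 6 ms, and after 120 ms of the slower train the peak of `image_b` has moved to the bin containing 12 ms while the old peak has decayed to a small fraction of its former height.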

METHODS, ASSUMPTIONS AND PROCEDURES

The overall purpose of the current study is to develop a paradigm for research on the robustness of ASR (Allen, 2005) including a means of comparing the robustness of ASR with the robustness of HSR. This section of the report describes the development of norms for the robustness of HSR; the corresponding section of the companion report described the development of norms for the robustness of ASR. The studies of HSR and ASR employ the same set of stimuli – a structured database of simple syllables and the same response measure – percent correct syllable recognition. The main difference between the HSR and ASR studies is in the training and the systems used to perform the recognition, that is, the human brain versus a computer-based HMM recognizer using MFCC features.

Structured, syllable corpus

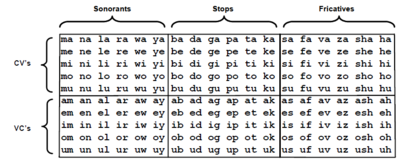

A structured corpus of simple syllables was used to establish both the ASR robustness norms and the HSR robustness norms. The corpus was compiled by Ives et al. (2005) who used phrases of four syllables to investigate VTL discrimination and show that the Just Noticeable Difference (JND) in speaker size is about 5 %. There were 180 syllables in total, composed of 90 pairs of consonant-vowel (CV) and vowel-consonant (VC) syllables, such as ‘ma’ and ‘am’. There were three consonant-vowel (CV) groups and three vowel-consonant (VC) groups as shown in Table I. Within the CV and VC categories, the three groups were distinguished by consonant category: sonorants, stops, or fricatives. So, the corpus is a balanced set of simple syllables in CV-VC pairs, rather than a representative sample of syllables from the English language. The vowels were pronounced as they are in most five vowel languages, like Japanese and Spanish, so that they could be used with a wide range of listeners. A useful mnemonic for the pronunciation is the names of the notes of the diatonic scale, “do, re, mi, fa, so” with “tofu” for the /u/. For the VC syllables involving sonorant consonants (e.g., oy), the two phonemes were pronounced separately rather than as a diphthong.

The syllables were recorded from one speaker (author RP) in a quiet room with a Shure SM58-LCE microphone. The microphone was held approximately 5 cm from the lips to ensure a high signal-to-noise ratio and to minimize the effect of reverberation. A high-quality PC sound card (Sound Blaster Audigy II, Creative Labs) was used with 16-bit quantization and a sampling frequency of 48 kHz. The syllables were normalized by setting the RMS value in the region of the vowel to a common value so that they were all perceived to have about the same loudness. We also wanted to ensure that, when any combination of the syllables was played in a sequence, they would be perceived to proceed at a regular pace; an irregular sequence of syllables causes an unwanted distraction. Accordingly, the positions of the syllables within their files were adjusted so that their perceptual centers (P-centers) all occurred at the same time relative to file onset. The algorithm for finding the P-centers was based on procedures described by Marcus (1981) and Scott (1993), and it focuses on vowel onsets. Vowel onset time was taken to be the time at which the syllable first rises to 50 % of its maximum value over the frequency range of 300-3000 Hz. To optimize the estimation of vowel onset time, the syllable was filtered with a gammatone filterbank (Patterson et al., 1992) having thirty channels spaced quasi-logarithmically over the frequency range of 300-3000 Hz. The thirty channels were sorted in descending order based on their maximum output value and the ten highest were selected. The Hilbert envelope was calculated for these ten channels and, for each, the time at which the level first rose to 50 % of the maximum was determined; the vowel onset time was taken to be the mean of these ten time values. The P-center was determined from the vowel onset time and the duration of the signal as described by Marcus (1981).
The P-center adjustment was achieved by the simple expedient of inserting silence before and/or after the sound. After P-center correction the length of each syllable, including the silence, was 683 ms.
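The vowel-onset estimate described above can be sketched as follows. This is an illustration rather than the authors' code: it assumes the gammatone filtering and Hilbert envelopes have been computed upstream and are supplied as one list of non-negative samples per channel, it takes ‘50 % of the maximum’ on a linear amplitude scale, and the function names are hypothetical. The Marcus (1981) step that converts onset time and duration into a P-center is not reproduced here.

```python
def first_half_max_time(envelope, sample_rate_hz):
    """Time (s) at which the envelope first reaches 50 % of its own maximum."""
    threshold = 0.5 * max(envelope)
    for index, value in enumerate(envelope):
        if value >= threshold:
            return index / sample_rate_hz


def vowel_onset_time(channel_envelopes, sample_rate_hz, n_best=10):
    """Mean half-maximum time over the n_best channels with the largest peaks."""
    # Sort channels in descending order of their maximum output value.
    ranked = sorted(channel_envelopes, key=max, reverse=True)
    best = ranked[:n_best]
    times = [first_half_max_time(env, sample_rate_hz) for env in best]
    return sum(times) / len(times)


# Toy example: three channels at 1 kHz, keeping the two strongest.
toy_channels = [
    [0, 0, 1, 4, 8, 8],   # peak 8, reaches 4 at sample 3
    [0, 1, 2, 2, 2, 2],   # peak 2, weakest channel, discarded
    [0, 0, 0, 0, 5, 10],  # peak 10, reaches 5 at sample 4
]
toy_onset = vowel_onset_time(toy_channels, sample_rate_hz=1000, n_best=2)
```

In the report's configuration the input would be the thirty channel envelopes sampled at 48 kHz, with `n_best` left at ten.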

Scaling the syllable corpus

Once the syllable recordings were edited and standardized, a vocoder referred to as STRAIGHT (Kawahara and Irino, 2005) was used to generate all the different ‘speakers’, that is, versions of the corpus in which each syllable was transformed to have a specific combination of VTL and GPR. STRAIGHT uses the classical source-filter theory of speech (Dudley, 1939) to segregate GPR information from the spectral-envelope information associated with the shape and length of the vocal tract. STRAIGHT produces a pitch-independent spectral envelope that accurately tracks the motion of the vocal tract throughout the syllable. Subsequently, the syllable can be resynthesized with arbitrary changes in GPR and VTL; so, for example, the syllable of a man can be readily transformed to sound like that of a woman or a child. The vocal characteristics of the original speaker, other than GPR and VTL, are preserved by STRAIGHT in the scaled syllable. Syllables can also be scaled well beyond the normal range of GPR and VTL values encountered in everyday speech and still be recognizable (e.g., Smith et al., 2005).

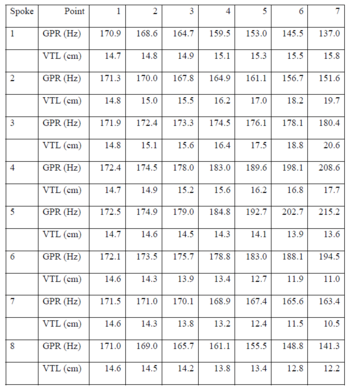

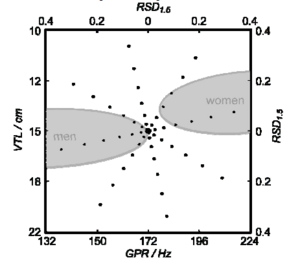

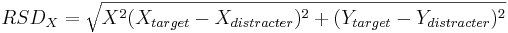

The reference speaker in both the HSR and ASR studies was assigned GPR and VTL values on the line between the average adult man and adult woman on the GPR-VTL plane, and mid-way between the adult man and woman, where both the GPR and VTL dimensions are logarithmic. Peterson and Barney (1952) reported that the average GPR of men is 132 Hz, while that of women is 223 Hz, and Fitch and Giedd (1999) reported that the average VTLs of men and women were 155.4 and 138.8 mm, respectively. Accordingly, the reference speaker was assigned a GPR of 171.7 Hz and a VTL of 146.9 mm. For scaling purposes, the VTL of the original speaker was taken to be 165 mm. A set of 56 scaled speakers was produced with STRAIGHT in the region of the GPR-VTL plane surrounding the reference speaker, and each speaker had one of the combinations of GPR and VTL illustrated by the points on the radial lines of the GPR-VTL plane in Fig. 3. There are seven speakers on each of eight spokes. The ends of the radial lines form an ellipse whose minor radius is four semi-tones in the GPR direction and whose major radius is six semi-tones in the VTL dimension; that is, the GPR radius is 26 % of the GPR of the central speaker (4 semi-tones: $2^{4/12} \approx 1.26$, an increase of 26 %), and the VTL radius is 41 % of the VTL of the central speaker (6 semi-tones: $2^{6/12} \approx 1.41$, an increase of 41 %). The step size in the VTL dimension was deliberately chosen to be 1.5 times the step size in the GPR direction because the JND for speaker size is larger than that for pitch. The seven speakers along each spoke are spaced logarithmically in this log-log, GPR-VTL plane. The spoke pattern was rotated anti-clockwise by 12.4 degrees so that there was always variation in both GPR and VTL when the speaker changes. This angle was chosen so that two of the spokes form a line coincident with the line that joins the average man with the average woman in the GPR-VTL plane. The GPR-VTL combinations of the 56 different scaled speakers are presented in Table II.
The important variable in both the ASR and the HSR experiments is the distance along a spoke from the reference voice at the center of the spoke pattern to a given test speaker. The distance is referred to as the Radial Scale Distance (RSD). It is the geometrical distance from the reference voice to the test voice, which is

$$\mathrm{RSD} = \sqrt{\chi^2 (X - X_0)^2 + (Y - Y_0)^2}$$

where $X$ and $Y$ are the coordinates of GPR and VTL, respectively, in this log-log space, $X_0$ and $Y_0$ are the coordinates of the reference voice, and $\chi$ is the GPR-VTL trading value, 1.5. The speaker values were positioned along each spoke in logarithmically increasing steps on these logarithmic co-ordinates. The seven RSD values were 0.0071, 0.0283, 0.0637, 0.1132, 0.1768, 0.2546, and 0.3466. There are eight speakers associated with each RSD value, and their coordinates form ellipses in the logGPR-logVTL plane. The seven ellipses are numbered from one to seven, beginning with the innermost ellipse, with an RSD value of 0.0071.
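As a check on the numbers, the sketch below reads the RSD as a Euclidean distance in natural-log coordinates with the GPR axis weighted by the trading value of 1.5. That reading is an assumption, chosen because it reproduces the quoted maximum of 0.3466 at both ends of the ellipse (four semi-tones of GPR or six semi-tones of VTL); the function and constant names are hypothetical.

```python
import math

CHI = 1.5            # GPR-VTL trading value from the text
REF_GPR_HZ = 171.7   # reference speaker, from the text
REF_VTL_MM = 146.9


def semitones(n):
    """Frequency (or length) ratio corresponding to n semi-tones."""
    return 2.0 ** (n / 12.0)


def rsd(gpr_hz, vtl_mm, ref_gpr_hz=REF_GPR_HZ, ref_vtl_mm=REF_VTL_MM, chi=CHI):
    """Radial Scale Distance from the reference voice, in natural-log units."""
    dx = math.log(gpr_hz / ref_gpr_hz)   # offset along the log-GPR axis
    dy = math.log(vtl_mm / ref_vtl_mm)   # offset along the log-VTL axis
    return math.hypot(chi * dx, dy)


# Both extremes of the outer ellipse give the same distance.
rsd_gpr_extreme = rsd(REF_GPR_HZ * semitones(4), REF_VTL_MM)
rsd_vtl_extreme = rsd(REF_GPR_HZ, REF_VTL_MM * semitones(6))

# The seven listed values are matched by (k/7)^2 times the maximum; this is an
# observation about the numbers, not a rule stated in the report.
ellipse_rsd = [round(rsd_vtl_extreme * (k / 7) ** 2, 4) for k in range(1, 8)]
```

Under this reading, the weighting by 1.5 is what makes a four-semi-tone change in GPR count as the same distance as a six-semi-tone change in VTL.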

In the ASR study, the HMM recognizer was trained on the data from one of three different ellipses: numbers one, four and seven with RSD values of 0.0071, 0.1132, and 0.3466, respectively. In each case, the recognizer was tested on the remaining speakers. The HMM recognizer was initially trained on the reference speaker and the voices of ellipse number one, that is, the eight speakers closest to the reference speaker in the GPR-VTL plane. This initial experiment was intended to imitate the training of a standard, speaker-specific ASR system, which is trained on a number of utterances from a single speaker. The eight adjacent points provided the small degree of variability needed to produce a stable hidden Markov model for each syllable. The recognizer was then tested on all of the scaled speakers, excluding those used in training, to provide an indication of the native robustness of the ASR system. The elliptical distribution of speakers across the GPR-VTL plane was chosen to reveal how performance falls off as GPR and VTL diverge from those of the reference speaker. Statistical learning machines do not generalize well beyond their training data. Thus, it was not surprising to find that the performance of the speaker-dependent recognizer was not robust; performance fell off rapidly as VTL diverged from that of the reference speaker. Statistical learning machines are better at interpolating than at extrapolating, and so the recognizer was retrained, first with the speakers on ellipse four, and then with the speakers on ellipse seven, to see if giving the system experience with a larger range of speakers would enable it to interpolate within the ellipse and so improve performance. The eight points in ellipses four and seven were used as the training sets in these two experiments. The results are presented in the next section.
In the HSR study, pilot testing made it clear that performance would be good throughout the GPR-VTL plane, and as a result, the listeners were only trained with the central, reference speaker and then tested with the speakers on ellipse seven, which has an RSD value of 0.3466. These are the speakers at the ends of the spokes with GPR and VTL values most different from the reference speaker. A comparison of the ASR and HSR results is presented in the final part of the Results and Discussion section below.

Training the listeners to use the syllable database and the graphical user interface

Human listeners understand speech and have no need of training to demonstrate that they are consummate speech recognizers. However, in order to compare the performance of the human listeners with the performance of an ASR system, it was necessary to teach listeners to identify the syllables they hear as speech sounds using visual labels presented in a graphical user interface (GUI). In an ASR system, the correspondence is established by the label files provided as basic resources to the recognizer. There are two components to the training problem: one is to learn the location of each syllable symbol in the GUI, and the other is to learn the specific pronunciation of the syllables; for example, “ti” is to be pronounced as in the word “tea” rather than as in the word “tie”. It is important that the listeners learn both the location and the pronunciation of the syllables prior to the gathering of the robustness norms, so that errors made while learning the location and pronunciation information are not interpreted as lack of robustness when analysing the robustness data. It is also important that they learn the location and pronunciation of the syllables in a neutral environment which directs their attention away from variation in GPR and VTL, and so does not provide robustness training. The training of individual listeners began with a hearing test (audiogram) in which absolute threshold for the audiometric frequencies, 0.5, 2.0 and 4.0 kHz, was measured using a two-alternative, forced-choice procedure (Green and Swets, 1966) and a three-down, one-up tracking rule (Levitt, 1971). All of the listeners were found to have audiometrically normal hearing, that is, within 15 dB of absolute threshold at the three frequencies. This audiogram test also introduced the listeners to the sound attenuating booths, the computer display and the computer control system for the experiments.
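The three-down, one-up tracking rule used for the audiogram can be sketched as below. The rule itself is Levitt's (1971), and it converges on the level that yields about 79.4 % correct; the starting level, the step size and the function name are hypothetical, since the report does not give them.

```python
def three_down_one_up(responses, start_level_db, step_db):
    """Return the level presented on each trial for a list of True/False responses."""
    level = start_level_db
    correct_run = 0
    levels = []
    for correct in responses:
        levels.append(level)
        if correct:
            correct_run += 1
            # Three consecutive correct responses make the task harder.
            if correct_run == 3:
                level -= step_db
                correct_run = 0
        else:
            # A single error makes the task easier and resets the run.
            level += step_db
            correct_run = 0
    return levels


track = three_down_one_up([True, True, True, True, False, True],
                          start_level_db=40, step_db=2)
```

Here the level drops after the third correct response and rises again after the error on the fifth trial.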

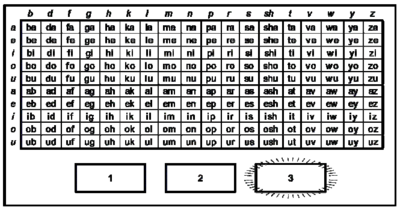

The pronunciation of the syllables

The GUI for the experiment was based on Table I; however, to assist the listeners in locating the syllable labels, they were reordered within rows, so that the consonants occurred in alphabetical order, as illustrated in the image of the GUI presented in Fig. 4. Once the listener had identified the graphemic form of the syllable mentally, they could then address the table by column (consonant) and row (vowel) to find the required syllable label. The task is not difficult, but listeners take some time to get up to speed, and during this time they make occasional motor or attention errors. The training was intended to ensure that the effect of these errors on the robustness norms was minimized. The syllable database includes 180 syllables of the English language. Each of the syllables has one of the five cardinal, sustained vowels that are common to most of the world’s languages, so the test can be used with non-English listeners should the need arise. The phonetic symbols for the vowels are /a:/, /e:/, /i:/, /o:/, and /u:/ as in the words ‘rot’, ‘may’, ‘pea’, ‘bureau’, and ‘proof’, respectively. In this extended example, the vowel as it appears in the visual form of the word is different from the vowel in the phonetic label (e.g., /a:/) in every case; the second vowel sound in ‘bureau’ is /o:/. This is a simple demonstration of the fact that the correspondence between phonemes and alphabetical symbols in English is inconsistent and extremely complex. This is due, on the one hand, to the vowel shift that took place in English in the Middle Ages, and on the other hand, to the fact that English has roots in both the Teutonic and Romance language families. Listeners could be taught to use the IPA transcription of the syllables, but it is a very complicated symbol set, and it is not really necessary for this relatively simple database.
The note names for the diatonic musical scale – ‘do’, ‘re’, ‘mi’, ‘fa’, ‘so’, ‘la’, ‘ti’, ‘do’ – are the same in English as in all other European languages, and four of the five cardinal vowels appear in the list – /a:/, /e:/, /i:/, and /o:/. So we used the note names of the diatonic scale to explain the pronunciation convention for the experiment; the note names were also included on the GUI in the first column under the vowel symbols. We were not able to find a single simple syllable to provide the correct pronunciation for /u:/, and so we co-opted the word ‘tofu’ which has the correct pronunciation and is memorable once the correspondence is pointed out.

The location of the syllable labels

The initial training on the use of the GUI and the syllable database was designed to draw the listener’s attention to the phonetic organization of the syllables in the GUI and the method of response. The training was structured as a series of 15 short recognition tests (RTs) that increased in difficulty as the training proceeded; a criterion level of performance had to be achieved to progress from one test to the next. The training began with two easy conditions in which the listener was told the vowel and only had to identify the consonant in the syllable. These conditions help the listener learn to read the table and understand its structure and the experimental paradigm.

RT1: CV syllables, with fixed vowel and variable consonant: 10 trials, 80 % correct. On each trial, one row of the upper half of the syllable table was highlighted, indicating the vowel of the syllable in the third interval of that trial. The listener had to identify the consonant in the syllable by choosing a syllable in the highlighted row of the table.

RT2: VC syllables, with fixed vowel and variable consonant: 10 trials, 80 % correct. On each trial, one row of the lower half of the syllable table was highlighted, indicating the vowel of the syllable in the third interval of that trial. The listener had to identify the consonant in the syllable by choosing a syllable in the highlighted row of the table.

The training continued with four more easy conditions in which the listener was told the consonant and only had to identify the vowel in the syllable. These conditions draw attention to the vertical structure of the syllable table.

RT3: CV syllables, with fixed consonant and variable vowel: 10 trials, 80 % correct. On each trial, one column of the upper half of the syllable table was highlighted, indicating the consonant of the syllable in the third interval. The listener had to identify the vowel in the syllable by choosing a syllable in the highlighted column of the table. The test was limited to one category of consonants (sonorants, stops or fricatives), and the category was chosen at random for each listener.

RT4: VC syllables, with fixed consonant and variable vowel: 10 trials, 80 % correct. On each trial, one column of the lower half of the syllable table was highlighted, indicating the consonant of the syllable in the third interval. The listener had to identify the vowel in the syllable by choosing a syllable in the highlighted column of the table. The test was limited to one category of consonants (sonorants, stops or fricatives), and the category was chosen at random for each listener.

RT5: CV syllables, with fixed consonant and variable vowel: 10 trials, 80 % correct. This condition was like RT3, except that the response set was not limited to a single category of consonants; all consonants were equally likely on each trial.

RT6: VC syllables, with fixed consonant and variable vowel: 10 trials, 80 % correct. This condition was like RT4, except that the response set was not limited to a single category of consonants; all consonants were equally likely on each trial.

The training continued with two conditions in which the response set was gradually expanded; the consonant was restricted to one of the three categories – sonorants, stops or fricatives – but it was randomized within category. The pass mark was reduced by 10 percentage points, from 80 % to 70 %, to accommodate the increased perplexity of the test.

RT7: CV syllables, with fixed consonant category and variable vowel: 10 trials, 70 % correct. This condition was like RT5, except that the response set was expanded to include one complete consonant category within the CV syllables.

RT8: VC syllables, with fixed consonant category and variable vowel: 10 trials, 70 % correct. This condition was like RT6, except that the response set was expanded to include one complete consonant category within the VC syllables.

The training continued with two conditions in which the response set was expanded to include all of the syllables within the top or bottom halves of the table.

RT9: CV syllables chosen at random from the full set: 10 trials, 70 % correct.

RT10: VC syllables chosen at random from the full set: 10 trials, 70 % correct.

Finally, there were five tests in which the response set was the entire table of syllables.

RT11: all syllables chosen at random from the full set: 10 trials, 70 % correct.

RT12, 13, 14, 15: all syllables chosen at random from the full set: 20 trials, 70 % correct.
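The training schedule listed above can be summarized as a small data structure. The sketch assumes that a listener repeats a test until the criterion is met, which the report implies but does not spell out; `run_test` stands in for presenting one test and returning the number of correct responses, and all names here are hypothetical.

```python
def build_schedule():
    """(name, trials, pass mark in percent) for RT1 to RT15, as listed in the text."""
    schedule = [(f"RT{i}", 10, 80) for i in range(1, 7)]
    schedule += [(f"RT{i}", 10, 70) for i in range(7, 12)]
    schedule += [(f"RT{i}", 20, 70) for i in range(12, 16)]
    return schedule


def passed(n_correct, n_trials, pass_percent):
    """True when the proportion correct meets the pass mark."""
    return 100 * n_correct >= pass_percent * n_trials


def run_training(schedule, run_test, max_attempts=10):
    """Step through the schedule; return how many attempts each test needed."""
    attempts = {}
    for name, n_trials, pass_percent in schedule:
        for attempt in range(1, max_attempts + 1):
            n_correct = run_test(name, n_trials)
            if passed(n_correct, n_trials, pass_percent):
                attempts[name] = attempt
                break
        else:
            raise RuntimeError(f"{name} not passed in {max_attempts} attempts")
    return attempts


# A listener who answers every trial correctly needs one attempt per test.
perfect = run_training(build_schedule(), lambda name, n_trials: n_trials)
```

A listener who passes every test at the first attempt hears 190 training trials in total.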

The robustness test for human listeners

From the point of view of the listeners, the robustness test was a simple extension of the training experiment. At the start of the session, the last condition of the training experiment was repeated with the reference speaker used for the training session, that is, the speaker at the center of the GPR-VTL plane in Figure 3 with a pitch of 172 Hz and a VTL of 15 cm. The condition included 20 trials with the test syllable on each trial chosen from the full set of 180 CVs and VCs. Then the listener was presented with 16 conditions that had the same form and the same response set, but the speakers were the eight who were most different from the training speaker; that is, the eight speakers with RSD values of 0.3466 who form the largest ellipse in the GPR-VTL plane of Figure 3. Each of these eight speakers was presented twice, and there were two conditions with the training speaker, so the robustness test consisted of 18 speaker-conditions presented in a random sequence. The test was performed by eight listeners, all of whom had audiometrically normal hearing. Two of the listeners were lab members; the remaining six were paid at an hourly rate. They were instructed in the lab’s ethical procedures and all signed informed consent forms at the end of the introduction.

RESULTS AND DISCUSSION

Results of the HSR experiment

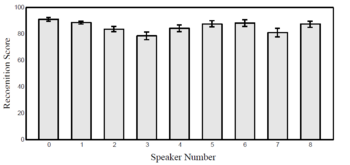

The results of the robustness test are presented in Fig. 5, which shows the average performance for each speaker, averaged over all listeners and both presentations of the speaker. The speakers are designated by their spoke numbers, which are labelled 1-8 in counter-clockwise order beginning with the spoke that extends from the reference speaker to the large, normal man. In all cases, performance is between 80 and 90 percent correct. Performance is nominally best for the reference speaker, but the level is essentially the same as for the speakers at the ends of spokes 1, 5, 6, and 8. Performance is slightly depressed for the speakers at the ends of spokes 3 and 7, and these speakers are, arguably, the most novel in terms of their combinations of GPR and VTL values. An analysis of variance showed that there was, indeed, a significant effect of speaker (F8 = 2.93, p<0.007). However, an examination of individual paired comparisons showed that the main effect of speaker is almost entirely due to the comparison between the reference speaker (0) and speaker 3; the comparison between speaker 0 and speaker 7 was not significant. In summary, the results show that HSR is very robust. There was very little decrement in performance when the reference speaker was scaled to represent speakers covering the entire human range and beyond, and this was true despite the fact that the listeners were given no exposure whatsoever to speakers with differing GPR and VTL before the start of the robustness test.

Comparison of the HSR and ASR robustness results

The robustness of HSR to variability in VTL stands in marked contrast to the lack of robustness for the speaker-specific ASR system trained on the reference speaker and speakers on the ellipse with the smallest RSD, 0.0071. The human listeners had previous experience with speakers whose GPR and VTL values differ from those of the reference speaker in the direction along spoke 1, where there are speakers similar to men of various sizes, and in the direction of the wedge between spokes 5 and 6, where there are speakers similar to women and children in the normal population. So it is not surprising that they performed well with these speakers. However, speakers with combinations of GPR and VTL like those in the wedges between spokes 6 and 8, and between spokes 2 and 4, become novel rapidly as RSD increases, and human performance was just as good with almost all of these speakers. The data support the view of Irino and Patterson (2002) that the auditory system has mechanisms that adapt to the GPR of the voice and normalize for acoustic scale prior to the commencement of speech recognition. Speech scientists are not surprised by listeners’ ability to understand speakers with unusual combinations of GPR and VTL, presumably because they have considerable experience of their own normalization mechanisms. The interesting point in this regard is that they often overgeneralize when it comes to statistical learning machines, and predict that they too will be able to generalize and readily understand speakers with unusual combinations of GPR and VTL. The results from the ASR study show that there is a marked asymmetry in the performance of HMM/MFCC recognizers between extrapolation and interpolation. When the recognizer was trained on speakers with only a small range of GPR and VTL combinations, it had great difficulty extrapolating to speakers with different VTLs.
This result is important because this is precisely the way most commercial recognizers are trained, that is, on the voice of a single person. If that person is an adult, the recognizer is very unlikely to work for a child, and vice versa. Fortunately, the study suggests a solution to the VTL aspect of the robustness problem: when the recognizer was trained on speakers with a wide range of combinations of GPR and VTL, its robustness improved dramatically. This suggests that the speech sounds used to train a machine recognizer should be scaled with STRAIGHT, or some other pitch-synchronous scaling algorithm, to simulate speakers with a wide range of combinations of GPR and VTL.
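As a rough illustration of this training strategy, the Python sketch below generates a log-spaced grid of target GPR and VTL values around the reference speaker (172 Hz, 15 cm). The grid shape, the octave ranges and the function name are hypothetical choices made for the example; they are not the speaker set of Figure 3. The resynthesis step itself is not shown, because it would be performed by STRAIGHT or a comparable scaling algorithm, and no programming interface for it is assumed here.

```python
REF_GPR_HZ = 172.0   # GPR of the reference speaker
REF_VTL_CM = 15.0    # VTL of the reference speaker


def scaling_grid(gpr_octaves=1.0, vtl_octaves=0.5, steps=5):
    """Return (gpr_hz, vtl_cm, gpr_ratio, vtl_ratio) tuples.

    Ratios are log-spaced from 2**-span to 2**+span on each dimension.
    The default spans and step count are illustrative assumptions.
    """
    def log_ratios(span):
        return [2.0 ** (-span + 2.0 * span * i / (steps - 1))
                for i in range(steps)]

    return [
        (REF_GPR_HZ * g, REF_VTL_CM * v, g, v)
        for g in log_ratios(gpr_octaves)
        for v in log_ratios(vtl_octaves)
    ]


if __name__ == "__main__":
    for gpr_hz, vtl_cm, g, v in scaling_grid():
        # Each tuple is one simulated speaker; every training syllable
        # would be rescaled by these ratios before feature extraction.
        print(f"GPR {gpr_hz:6.1f} Hz  VTL {vtl_cm:5.2f} cm  "
              f"(ratios {g:.3f}, {v:.3f})")
```

Spacing the ratios logarithmically treats a doubling and a halving of GPR or VTL as steps of equal size, which is a natural choice for scale variables.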

CONCLUSION

A syllable recognition task was developed to establish norms for the robustness of HSR and ASR – norms that could be used to monitor the robustness of an ASR system during development and track its improvement towards the performance that might be expected from human listeners. HSR performance on the syllable task was found to be highly robust to variation in both GPR and VTL. The performance of a standard HMM/MFCC recognizer on the syllable task was not robust to variation in VTL when it was trained on a group of speakers having only a small range of VTLs. When it was trained on a group of speakers having a wide range of VTLs, robustness improved dramatically. The results suggest that the speech sounds used to train a machine recognizer should be scaled with STRAIGHT, or some other pitch-synchronous scaling algorithm, before training so that the training set simulates speakers with a wide range of combinations of GPR and VTL.

Acknowledgements

The research was supported by the UK Medical Research Council (G0500221; G9900369) and by the Air Force Office of Scientific Research, Air Force Materiel Command, USAF, under grant number FA8655-05-1-3043. The U.S. Government is authorized to reproduce and distribute reprints for Government purpose notwithstanding any copyright notation thereon. The views and conclusions contained herein are those of the authors and should not be interpreted as necessarily representing the official policies or endorsements, either expressed or implied, of the Air Force Office of Scientific Research or the U.S. Government.

References

Allen, J.B. (2005). Articulation and intelligibility. In D. Pisoni, R. Remez (Eds.), Handbook of speech perception. Blackwell: Oxford, UK.

Cohen, L. (1993). The scale transform. IEEE Trans. ASSP, 41, 3275-3292.

Dudley, H. (1939). Remaking speech. Journal of the Acoustical Society of America, 11, 169-177.

Fitch, W.T., Giedd, J. (1999). Morphology and development of the human vocal tract: a study using magnetic resonance imaging. Journal of the Acoustical Society of America, 106, 1511-1522.

Green, D.M., Swets, J.A. (1966). Signal detection theory and psychophysics. Wiley: New York.

Irino, T., Patterson, R.D. (2002). Segregating information about size and shape of the vocal tract using the stabilised wavelet-Mellin transform. Speech Communication, 36, 181-203.

Ives, D.T., Patterson, R.D. (2004). Size discrimination in CV and VC English syllables. British Society of Audiology, London, P63.

Kawahara, H., Irino, T. (2005). Underlying principles of a high-quality, speech manipulation system STRAIGHT, and its application to speech segregation. In P. Divenyi (Ed.), Speech separation by humans and machines. Kluwer Academic: Massachusetts, 167-179.

Levitt, H. (1971). Transformed up-down methods in psychoacoustics. Journal of the Acoustical Society of America, 49, 467-477.

Marcus, S.M. (1981). Acoustic determinants of perceptual center (P-center) location. Perception and Psychophysics, 30, 247-256.

Patterson, R.D., Holdsworth, J., Allerhand, M. (1992). Auditory models as preprocessors for speech recognition. In M.E.H. Schouten (Ed.), The Auditory Processing of Speech: From the auditory periphery to words. Mouton de Gruyter: Berlin, 67-83.

Peterson, G.E., Barney, H.L. (1952). Control methods used in a study of the vowels. Journal of the Acoustical Society of America, 24, 175-184.

Scott, S.K. (1993). P-centres in speech: an acoustic analysis. Doctoral dissertation, University College London.

Smith, D.R.R., Patterson, R.D., Turner, R., Kawahara, H., Irino, T. (2005). The processing and perception of size information in speech sounds. Journal of the Acoustical Society of America, 117, 305-318.

List of symbols, abbreviations and acronyms

AFA - Auditory Frequency Analysis

ASR - Automatic Speech Recognition

CV - Consonant-vowel

DCT - Discrete Cosine Transform

GPR - Glottal Pulse Rate

GUI - Graphical User Interface

HMMs - Hidden Markov Models

HTK - HMM Toolkit

HSR - Human Speech Recognition

JND - Just-noticeable difference

MFCCs - Mel-frequency Cepstral Coefficients

MSEs - Multi-source Environments

P-center - Perceptual Center

PR - Pulse Rate

PRN - Pulse Rate Normalization

RSD - Radial Scale Distance

RSN - Resonance Scale Normalization

RTs - Recognition Tests

VC - Vowel-consonant

VTL - Vocal Tract Length