Auditory Images, Auditory Figures and Auditory Events

From CNBH Acoustic Scale Wiki

Auditory perceptions are assembled in the brain from sounds entering the ear canal, in conjunction with knowledge of the current context and information from memory. It is not possible to record or measure perceptions directly, so any description of our perceptions must involve an explicit, or implicit, model of how perceptions are produced by a sensory system in conjunction with the brain. This chapter focuses on the initial auditory image that the system constructs when presented with a sound, and on a computational version of this Auditory Image Model (AIM) that illustrates the space of auditory perception and how auditory figures and events might appear in that space.


The Auditory Image Model of Auditory Perception

To account for everyday experience, it is assumed that sensory organs, and the neural mechanisms that process sensory data, together construct internal, mental models of objects as they occur in the world around us. That is, the visual system constructs the visual part of the object from the light the object reflects and the auditory system constructs the auditory part of the object from the sound the object emits, and these two aspects of the mental object are combined with any tactile and/or olfactory information, to produce our experience of an external object and any event associated with it. The task of the auditory neuroscientist is to characterize the auditory part of perceptual modelling. This chapter sets out some of the terms and assumptions used to discuss the internal space of auditory perception and the auditory images, auditory figures and auditory events that arise in that space in response to communication sounds. The phrase "auditory image model" is used to refer both to the conceptual model of what takes place in the brain and the computational version of the conceptual model which is used to illustrate how auditory images, figures and events might be represented in the brain, and so enable us to see what we hear.

We assume that the sub-cortical auditory system creates a perceptual space, in which an initial auditory image of a sound is assembled by the cochlea and mid-brain using largely data-driven processes. The auditory image and the space it occupies are analogous to the visual image and the space that appears when you open your eyes after being asleep. If the sound arriving at the ears is a noise, the auditory image is filled with activity, but it lacks organization, the fine-structure is continually fluctuating, and the space is not well defined. If the sound has a pulse-resonance form, an auditory figure appears in the auditory image with an elaborate structure that reflects the phase-locked neural firing pattern produced by the sound in the cochlea. Extended segments of sound, like syllables or musical notes, cause auditory figures to emerge, evolve, and decay in what might be referred to as auditory events, and these events characterize the acoustic output of the external source. All of the processing up to the level of auditory figures and events can proceed without the top-down processing associated with context or attention. For example, if we are presented with the call of an animal that we have never encountered before, the early stages of auditory processing will produce an auditory experience with the form of an event, even though we, as listeners, might be puzzled about how to interpret the event. It is also assumed that auditory figures and events are produced in response to sounds when we are asleep, and that it is a subsequent, more central process that evaluates the events and decides whether to wake in response to the sound.

When we are alert, the brain may interpret an auditory event in conjunction with events occurring in other sensory modalities at the same time, and with contextual information and memories, and so assign meaning to a sequence of perceptual events. If the experience is largely auditory, as with a melody, then the event with its meaning might be regarded as an auditory object, that is, the auditory part of the perceptual model of the external object that was the source of the sound. A detailed discussion of this view of hearing and perception is presented in "An introduction to auditory objects, events, figures, images and scenes". Examples of the main concepts are presented in the remainder of this introduction.

With these assumptions and concepts in mind, we can now turn to the internal representation of sound and how the auditory system might construct the representation that forms the basis of our perception of that sound.

Auditory Images

The components of everyday sounds fall naturally into three broad categories: tones, like the hoot of an owl or the toot of a horn; noises, like wind in the trees or the roar of a jet engine; and transients, like the crack of a breaking branch, the clip-clop of horses' hooves, or the clunk of a car door closing. The initial perceptions associated with the mental images aroused by these examples are what is meant by the term "auditory image". The auditory image is the first internal representation of a sound of which we can be aware. We cannot direct our brain to determine whether the eardrum is vibrating at 200 Hz, or whether the point on the basilar membrane that responds specifically to acoustic energy at 200 Hz is currently active, or whether neurons in sub-cortical nuclei of the auditory pathway that are normally involved in processing activity from the 200-Hz part of the basilar membrane are currently active. What we experience is the end product of a sequence of physiological processes that deals with all of the acoustic energy arriving at the ears in the recent past. The summary representation of recent acoustic input of which we are first aware is the auditory image. This summary image is also presumed to be the basis of all subsequent perceptual processing. There are no other auditory channels for secondary acoustic information that might be folded into our experience of the sound at a later point. The auditory image is the only auditory input to the central auditory processor that interprets incoming information in the light of the current context and elements of auditory memory.

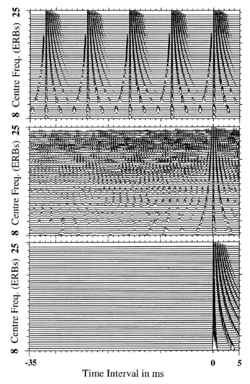

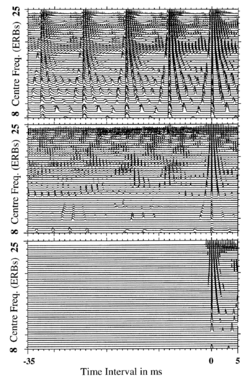

The three panels of Figure 2.1.1 present simulated auditory images of a regular click train with an 8-ms period (a), a white noise (b), and an isolated acoustic pulse (c); each is, arguably, the simplest broadband sound in its respective category (tone, noise, or transient). The click train sounds like a buzzy tone with a pitch of 125 Hz, the reciprocal of its 8-ms period. It generates an auditory image with a sharply-defined vertical structure that repeats at regular intervals across the image. Moreover, the temporal fine-structure within each instance of the structure is highly regular. These are the characteristics of communication tones as they appear in the simulated auditory image. The white noise produces a "sshhh" perception. It generates activity throughout the auditory image, but there are no stable structures and there is no repetition of the occasional transient feature that does arise in the activity. Moreover, the micro-structure of the noise image is highly irregular, except near the 0-ms vertical towards the right-hand edge of the image. These are the characteristics of noisy sounds in the auditory image. The acoustic pulse produces a perceptual "click." It generates an auditory image that is blank except for one well-defined structure attached to the 0-ms vertical at the right-hand side of the image. In summary, the attributes illustrated in the three panels of Figure 2.1.1 are the basic, distinguishing characteristics of the auditory images of tones, noises and transients.
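The three stimuli of Figure 2.1.1 are easy to synthesize, which makes them convenient test inputs for any simulation of the auditory image. The sketch below is not AIM code. The 48-kHz sampling rate, the 100-ms duration and the function names are assumptions chosen for illustration. The last lines make explicit the relation used above: the pitch of a click train is the reciprocal of its period.

```python
import numpy as np

FS = 48_000   # sampling rate in Hz (assumed)
DUR = 0.1     # stimulus duration in seconds (assumed)


def n_samples(fs: int = FS, dur: float = DUR) -> int:
    return int(round(fs * dur))


def click_train(period_s: float, fs: int = FS, dur: float = DUR) -> np.ndarray:
    """Regular train of unit impulses: the simplest broadband tone."""
    x = np.zeros(n_samples(fs, dur))
    x[:: int(round(period_s * fs))] = 1.0
    return x


def white_noise(fs: int = FS, dur: float = DUR, seed: int = 0) -> np.ndarray:
    """Gaussian white noise: the simplest broadband noise."""
    return np.random.default_rng(seed).standard_normal(n_samples(fs, dur))


def single_pulse(fs: int = FS, dur: float = DUR) -> np.ndarray:
    """One impulse at the start of the buffer: the simplest broadband transient."""
    x = np.zeros(n_samples(fs, dur))
    x[0] = 1.0
    return x


period_s = 0.008                     # 8-ms period, as in Figure 2.1.1a
tone = click_train(period_s)
noise = white_noise()
pulse = single_pulse()
print(f"click-train pitch: {1.0 / period_s:.0f} Hz")   # prints: click-train pitch: 125 Hz
```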

Many of the auditory images we experience in everyday life are fairly simple combinations of tones, noises and transients. For example, from the auditory perspective, the syllables of speech are communication tones (referred to as vowels) that are made more distinctive by attaching speech noises (referred to as fricative consonants), speech transients (referred to as plosive consonants), and mini-tones (referred to as sonorant consonants). The three panels of Figure 2.1.2 present simulated auditory images of the speech tone /ae/, the speech noise /s/ and the speech transient /k/ (letters set off by slashes indicate the sound, or phoneme, associated with the letter, or combination of letters). They are the auditory images for the phonemes of the word "ask". The banding of the activity in the image of the vowel (Figure 2.1.2a) makes it distinguishable from the uniform activity in the image of the click train (Figure 2.1.1a), and these differences play an important part in identifying the /ae/ as a speech sound. Nevertheless, the auditory images of the click train and the vowel are similar insofar as both contain a structure that repeats across the width of the auditory image. The structure is distinctive and, in this example, the spacing of the structures is the same for the two images. Similarly, the distribution of activity for the fricative consonant /s/ (Figure 2.1.2b) is restricted relative to the activity of the white noise (Figure 2.1.1b), but the images are similar insofar as there are no stable structures in the image, the fine-structure is irregular, and the activity spans the full width of the image. Finally, the distribution of activity for the plosive consonant /k/ (Figure 2.1.2c) is restricted relative to the activity of the acoustic pulse (Figure 2.1.1c), but the images are similar insofar as they are largely empty and the activity that does appear is attached to the 0-ms vertical of the image and has regular fine-structure.

Music is also largely composed of tones, noises and transients. Musical instruments from the brass, string and woodwind families produce sustained communication tones, and sequences of these tones are used to convey the melody information of music. The auditory images of the individual notes bear an obvious relationship to the auditory images of vowels and click trains. For example, the auditory image of a note from the mid-range of a bassoon is like a cross between the images produced by the click train and the vowel of “mask”, insofar as the vertical distribution of activity in the bassoon image is more extensive than that in the vowel image but less extensive than that of the click train image. A segment of a rapid roll on a snare drum is a musical noise, whose auditory image is similar to that of the white noise. A single tap on the percussionist’s wood block is a good example of a musical transient. It produces a slightly rounded version of the transient structure at the 0-ms time interval.

This Part of the book explains how auditory images might be constructed by the auditory system and why the three different classes of sounds produce such different auditory images. The implication is that auditory image construction evolved to segregate sounds from the three main categories that occur in the environment automatically, and to produce a representation suitable for analysis by the auditory part of the brain.

Auditory Figures

The static structures that communication tones produce in the auditory image are referred to as auditory figures because they are distinctive and they stand out like figures against the fluctuating activity of noise, which appears like an undistinguished background in the auditory image. The click train and the vowel in ‘mask’ are good examples of communication tones that produce prominent perceptions with strong pitches and distinctive timbres. The auditory figures that dominate the simulated auditory images of these sounds (Figures 2.1.1a and 2.1.2a) are elaborate structures, and their similarities and differences provide a basis for understanding the traditional attributes of pitch and sound quality. The auditory images in Figures 2.1.1a and 2.1.2a are similar inasmuch as they are both composed of an auditory figure that repeats at regular intervals across the image, and the interval is roughly the same for the two sounds. A large-scale repeating pattern in the auditory image is characteristic of tonal sounds. The horizontal spacing of the repeating figure that forms the pattern is the period of the sound, and it corresponds (inversely) to the pitch that we perceive; the buzzy tone and the vowel have nearly the same figure spacing and nearly the same pitch. When two or more tonal sounds are played together, the patterns of repeating auditory figures interact to produce compound patterns that explain the basics of harmony in music, that is, the preference for the musical intervals known as octaves, fifths and thirds. In this model, then, musical consonance is determined primarily by properties of peripheral auditory processing and only secondarily by cultural preferences.
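One way to make the idea of a compound pattern concrete, assuming exactly periodic tones tuned to just-intonation ratios (an idealization), is to ask how long the combined pattern takes to repeat. If the frequency ratio is p/q in lowest terms, the lower tone completes q cycles while the higher completes p, so the joint pattern repeats every q periods of the lower tone. The sketch below only computes that repetition period. It illustrates the arithmetic and is not AIM's account of consonance. The tritone ratio 45/32 is included for contrast, and the function name is hypothetical.

```python
from fractions import Fraction


def compound_period_ms(f_low_hz: float, ratio: Fraction) -> float:
    """Repetition period (ms) of the combined pattern of two exactly periodic
    tones whose frequency ratio f_high / f_low equals `ratio`."""
    # Fraction keeps p/q in lowest terms: the lower tone completes q cycles
    # while the higher completes p, so the joint pattern repeats every q / f_low.
    return 1000.0 * ratio.denominator / f_low_hz


INTERVALS = {
    "octave": Fraction(2, 1),
    "fifth": Fraction(3, 2),
    "major third": Fraction(5, 4),
    "tritone": Fraction(45, 32),
}

for name, ratio in INTERVALS.items():
    print(f"{name:<12} {str(ratio):>6}  pattern repeats every "
          f"{compound_period_ms(125.0, ratio):.0f} ms")
```

With a 125-Hz lower tone (8-ms period), the octave pattern repeats every 8 ms, the fifth every 16 ms, the major third every 32 ms, and the tritone only every 256 ms.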

The auditory images of the click train and vowel are different inasmuch as the auditory figure of the vowel has a more complex shape and it has a rougher texture. The shape and texture of the simulated auditory image capture much of the character of the auditory figure that we hear; that is, the sound quality or timbre of the sound. The banding of the activity in the vowel figure is largely determined by the centre frequencies and bandwidths of resonances in the vocal tract of the speaker at the moment of speaking. In the absence of these vocal resonances, a stream of glottal pulses generates an auditory figure much like those produced by a click train, only somewhat more rounded at the top and bottom. The resonances are referred to as ‘formants’ in speech research and the shape that they collectively impart to the figure identifies the vowel in large part. The texture of the auditory figure is primarily determined by the degree of periodicity of the source in the individual channels of the image. The texture of the formants in the auditory figure -- the degree of definition in the simulated image -- provides information concerning whether the speaker has a breathy voice, whether the consonants in the syllable are voiced, and whether the syllable is stressed or not.
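The pulse-resonance description can be sketched as a source-filter synthesis: a glottal pulse train excites a set of damped resonances, one per formant. The formant frequencies and bandwidths below are illustrative placeholders, not measured values for any particular vowel. Summing the resonances in parallel is also a simplification of a real vocal tract. The final check confirms that the dominant repetition interval of the result is still the 8-ms glottal period, which is why the vowel and the click train produce figures with the same spacing.

```python
import numpy as np

FS = 16_000  # sampling rate in Hz (assumed)


def damped_resonance(fc_hz: float, bw_hz: float, fs: int = FS, dur: float = 0.02) -> np.ndarray:
    """Impulse response of one resonance: a sinusoid at fc_hz whose envelope
    exp(-pi * bw_hz * t) gives a 3-dB bandwidth of about bw_hz."""
    t = np.arange(int(round(fs * dur))) / fs
    return np.exp(-np.pi * bw_hz * t) * np.sin(2 * np.pi * fc_hz * t)


def pulse_resonance_vowel(f0_hz: float = 125.0,
                          formants=((700, 90), (1700, 110), (2500, 160)),  # illustrative values
                          fs: int = FS, dur: float = 0.2) -> np.ndarray:
    n = int(round(fs * dur))
    pulses = np.zeros(n)
    pulses[:: int(round(fs / f0_hz))] = 1.0            # glottal pulse train
    tract = sum(damped_resonance(fc, bw, fs) for fc, bw in formants)
    return np.convolve(pulses, tract)[:n]              # each pulse rings the resonances


def dominant_period_ms(x: np.ndarray, fs: int = FS,
                       lo_s: float = 0.002, hi_s: float = 0.012) -> float:
    """Lag of the largest autocorrelation value between lo_s and hi_s."""
    r = np.correlate(x, x, mode="full")[len(x) - 1:]
    lo, hi = int(round(lo_s * fs)), int(round(hi_s * fs))
    return 1000.0 * (lo + int(np.argmax(r[lo:hi]))) / fs


vowel = pulse_resonance_vowel()
print(f"dominant period: {dominant_period_ms(vowel):.1f} ms")
```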

Transient sounds also produce auditory figures. They do not last long and the auditory system often misses the details of the shape and texture when an isolated transient occurs without warning. But if a transient is repeated, as when a horse walks slowly down a cobbled street, the individual shoe figures become sufficiently distinct to tell us, for example, if one of the shoes is loose or missing.

Noises do not produce auditory figures. The auditory images of noises have a rough texture and there are no stable patterns. A noise need not be entirely random, in either the temporal or the spectral dimension, to be a noise. In such cases, we hear that the noise is different from a continuous white noise. For example, a noise may have more hiss than 'sssshhh', indicating the presence of relatively more high-frequency energy than low-frequency energy, or it may have temporal instabilities that we hear as rasping, whooshing, or motor-boating. But the noise does not form auditory figures in the sense of stable structures with a definite timbre, and the fine-structure of the noise is always rough and constantly changing.

Auditory Events

Successive snapshots of the activity in the auditory image can be used to produce animated cartoons that track changes in a sound as they occur. These animated cartoons allow us to simulate the internal representation of an auditory event, that is, the dynamic, real-time, internal representation of an acoustic event occurring in the environment. Isolated vowels and musical notes are dominated by tones that produce simple auditory events with a beginning, a middle and an end. The cartoons of these auditory events can be slowed down so that we can observe how the auditory figures of communication sounds emerge, evolve, and dissolve in time as a sound proceeds. In these simple events, the auditory figure associated with the repeating structure in the neural activity pattern (NAP) rises out of the floor of the auditory image in position as the sound comes on; it does not rush in from the side in a blur. As the tone proceeds, the auditory figure remains fixed in position for as long as the tone is sustained. Then, when the tone goes off, the figure decays back into the floor of the auditory image without changing position or form, unless the acoustic properties of the sound change. Isolated tones are interesting inasmuch as they illustrate the basic dynamic properties of auditory perception: the time over which the loudness of the perception rises to its sustained level, and the time over which it falls back to silence after the sound has gone off.

Simple auditory events are also interesting from a larger perspective, because they are the building blocks of compound auditory events like melodies, words and phrases. The animated cartoon of a click-train melody illustrates the compound auditory event produced by a seven-note melody: do, re, mi, so, mi, re, do. The 'instrument' in this case is a click train, so the auditory figure is just the impulse response of the filterbank. The cartoon shows how the set of auditory figures produced by the individual tones bounces back and forth in discrete steps as the melody proceeds. In this example, only the pitch is changing, and as a result, the motion in the image is predominantly horizontal. This is the characteristic of the perceptual property, pitch: it determines the position of the set of auditory figures in the auditory image. Scanning slowly through the frames of the cartoon shows how the simple auditory events corresponding to the individual tones appear and disappear in position as the compound event proceeds. It shows that the operation of the auditory preprocessor is very fast with respect to our perception of the melody.
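Because the horizontal spacing of the repeating figure equals the period of the tone, the step-wise horizontal motion in the melody cartoon can be predicted from the note frequencies alone. The sketch below assumes equal temperament and a hypothetical starting note (do) at 125 Hz. The actual tuning and register of the melody stimulus are not given here.

```python
# Semitone offsets of the solfege notes above "do" in a major scale
SEMITONES = {"do": 0, "re": 2, "mi": 4, "so": 7}


def period_ms(note: str, do_hz: float = 125.0) -> float:
    """Period of an equal-tempered note; do_hz is an assumed reference pitch."""
    f_hz = do_hz * 2.0 ** (SEMITONES[note] / 12.0)
    return 1000.0 / f_hz


melody = ["do", "re", "mi", "so", "mi", "re", "do"]
previous = None
for note in melody:
    p = period_ms(note)
    step = "" if previous is None else f"  (shift {p - previous:+.2f} ms)"
    print(f"{note:>2}: figure spacing {p:.2f} ms{step}")
    previous = p
```

Higher notes have shorter periods, so each step up the scale moves the repeating figures closer together, and each step down moves them apart.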

Video: A click-train melody as an auditory event (Arpeggio.mov)

Next consider the auditory event produced by the word ‘leo’, simulated by the "leo" cartoon below. Nominally, leo is composed of three phonemes, /l/, /i/ and /o/, and the canonical auditory figures associated with these phonemes appear briefly at the start, middle and end of the event. But the visual event produced by the cartoon is dominated by the motion produced by the transition from the first phoneme, /l/, to the second, /i/, and the motion produced by the transition from the second phoneme, /i/, to the final phoneme, /o/. When you listen to the auditory event, you can hear the transitions (especially if you are watching the cartoon), but the perception is dominated by the phonemes and you hear the word with its meaning. This is an example of the influence of learning and context in perception.

In this example, the word is spoken in a monotone voice (that is, on a fixed pitch), and so the event is dominated by the vertical motion of the formants as they move from the positions that define /l/ to those that define /i/ and then on to the positions that define /o/. In the word "leo," the second formant of the auditory figure rises rapidly at the onset of the word into the high position associated with the /i/ vowel and then drops back into the lower position associated with the second formant of the final /o/ vowel. Vertical motion of auditory figure components is one of the defining characteristics of speech.

Video: The word "leo" as an auditory event (Leo.mov)

The videos of auditory events illustrate one of the most important properties of AIM as a model of auditory perception: change in the simulated auditory image occurs in synchrony with change in our perception of the sound. That is, any change in the position of the auditory figure, or any change in the shape of the figure, occurs in synchrony with the changes we hear in the sound. High-resolution, real-time displays of dynamic auditory events cannot be created from the traditional representation of sound, the spectrogram. The temporal fine structure that defines the auditory figure and its texture is integrated out during the production of the spectrogram. The chapters in Part 2 of the book explain how the temporal integration mechanism in AIM converts temporal information on the physiological time scale (milliseconds and hundreds of microseconds) into position information that appears as the shape, texture and pattern of auditory figures as they evolve in the auditory image. The integration mechanism preserves temporal information on the psychological time scale (tens of milliseconds and hundreds of milliseconds) and synchronizes the motion in the image to our experience of the change in the sound over time.
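The conversion of time into position can be illustrated with a deliberately simplified, single-channel sketch in the spirit of strobed temporal integration. Local maxima of the NAP above a threshold act as strobes. The NAP that follows each strobe is added into a buffer indexed by time interval since the strobe, and the buffer decays between strobes. The strobe criterion, the 35-ms interval range, the 30-ms half-life and the function name are all assumptions, not AIM's actual parameters. For an idealized click-train NAP, the repeating activity lands at fixed intervals (0, 8, 16 ms, ...), so a periodic time pattern becomes a stationary spatial pattern.

```python
import numpy as np


def strobed_integration(nap: np.ndarray, fs: int, max_interval_s: float = 0.035,
                        half_life_s: float = 0.030, strobe_frac: float = 0.5) -> np.ndarray:
    """Simplified single-channel sketch of strobed temporal integration.

    Each local maximum of the NAP that exceeds strobe_frac * max(nap) is a
    strobe; the NAP segment that follows it is added to an image buffer
    indexed by time interval since the strobe, and the buffer decays with
    the given half-life between strobes. All parameters are assumptions.
    """
    n_int = int(round(max_interval_s * fs))
    image = np.zeros(n_int)
    decay = 0.5 ** (1.0 / (half_life_s * fs))        # per-sample decay factor
    threshold = strobe_frac * nap.max()
    last = 0
    for i in range(1, len(nap) - 1):
        if nap[i] >= threshold and nap[i] >= nap[i - 1] and nap[i] > nap[i + 1]:
            image *= decay ** (i - last)               # let older activity fade
            last = i
            segment = nap[i:i + n_int]
            image[:len(segment)] += segment            # align the segment on the strobe
    return image


fs = 16_000
nap = np.zeros(fs // 5)            # 200 ms of one channel
nap[64::128] = 1.0                 # idealised NAP of an 8-ms click train
image = strobed_integration(nap, fs)
print(np.flatnonzero(image) / fs * 1000)   # intervals (ms) that hold activity
```

In the AIM figures, the 0-ms vertical is at the right and larger intervals extend to the left. Plotting `image[::-1]` would mimic that orientation.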

Computational Simulation of Auditory Preprocessing

A computational version of AIM has been developed to illustrate the concepts of the theoretical version of AIM, and to bring them to life in pictures and cartoons. Sound is dynamic, by definition, and cartoons are worth many thousands of words when it comes to describing auditory events. In the theoretical version of AIM, it is assumed that the peripheral auditory system constructs an initial representation, or image, of sound as it arrives at the ear, in preparation for pattern analysis and source identification by more central modules of the auditory system. The computational version of AIM simulates the processing performed by the peripheral part of the auditory system and illustrates the form of the internal representation of sound as it proceeds through the image construction process to a representation that could, arguably, be considered the basis of our initial experience of the sound. Imagine the situation when a hummingbird hovers behind a nearby bush or a sackbut plays a note in the next room. The listener experiences an auditory event in response to these sounds, even if they have never encountered a hummingbird or a sackbut before, and even if they know nothing about the hum of a hummingbird or the tones of a sackbut. This part of the hearing process does not require assistance from central processes to produce the initial representation of sound. The peripheral auditory system is largely characterized by data-driven, bottom-up processing that probably operates in the same way at night, when we are asleep and most of our central processors are quiescent. The more central processes involved in source identification, and in associating the source with contextual and semantic knowledge about birds and musical instruments, are not included in the computational model.

The concepts in the computational model are very similar to those in the theoretical model of peripheral processing, and so the computational model is also referred to as the auditory image model (AIM). The modules that simulate auditory preprocessing are, of course, imperfect, but they can nevertheless illustrate the form of the space of auditory perception and the basic form of the figures and events that characterize the auditory image when presented with everyday sounds and communication tones. The computational version of AIM was used to produce the simulated auditory images of the tones, noises and transients shown in Figs 2.1.1 and 2.1.2 above. These simulated auditory images not only provide concrete illustrations of theoretical concepts like auditory figures and auditory events; they also provide a wealth of detail that can be used to assess the theoretical concepts and the auditory image approach to the study of auditory perception.

The Space of Auditory Perception and the Auditory Image

The remainder of this introductory chapter focuses on the space of auditory perception and how the auditory system might generate the dimensions of the internal representation of sound. The construction of the initial auditory image is presented as a succession of four separate processes; in the auditory system, the processing is undoubtedly more complicated and the processes may be partly interleaved, but the order in which the dimensions of the perceptual space are generated is probably correct.

Frequency Analysis and the Tonotopic Dimension of the Auditory Image

The first stage of processing takes place in the cochlea, where incoming sound is subjected to a wavelet analysis (Daubechies, 1992; Reimann, 2006) by the basilar membrane and outer hair cells which are collectively known as the "basilar partition". This wavelet transform produces the tonotopic dimension of auditory processing and auditory perception. It is a quasi-logarithmic frequency axis and it forms the ordinate of the graphical representation of the auditory images presented in the different panels of Figures 2.1.1 and 2.1.2, and the videos of the melody and the word “leo.”

There are three parts to the argument for choosing the gammatone function as an auditory filter, one physiological, one psychological and one practical:

1. Physiological: The impulse response of the gammatone filter function provides an excellent fit to the impulse responses obtained from cats with the revcor technique (de Boer and de Jongh, 1978; Carney and Yin, 1988). The gammatone filter is defined in the time domain by its impulse response:

$$\mathrm{gt}(t) = a\,t^{\,n-1}\,e^{-2\pi b t}\cos(2\pi f_0 t + \phi), \qquad t > 0$$

The name gammatone comes from the fact that the envelope of the impulse response has the form of the gamma distribution from statistics, and the fine structure of the impulse response is a tone at the centre frequency of the filter, f0 (de Boer and de Jongh, 1978). The primary parameters of the gammatone (GT) filter are b and n: b largely determines the duration of the impulse response and thus the bandwidth of the GT filter; n is the order of the filter and it largely determines the tuning, or Q, of the filter. Carney and Yin (1988) fitted the GT function to a large number of revcor impulse responses and showed that the fit was surprisingly good.

2. Psychological: The properties of frequency selectivity measured physiologically in the cochlea and those measured psychophysically in humans are converging. Firstly, the shape of the magnitude characteristic of the GT filter with order 4 is very similar to that of the roex(p) function commonly used to represent the magnitude characteristic of the human auditory filter (Schofield, 1985; Patterson and Moore, 1986; Patterson, Holdsworth, Nimmo-Smith and Rice, 1988). Secondly, the latest analysis by Glasberg and Moore (1990) indicates that the bandwidth of the filter corresponds to a fixed distance on the basilar membrane as suggested originally by Greenwood (1961). Specifically, Glasberg and Moore now recommend Equation 2 for the Equivalent Rectangular Bandwidth (ERB) of the human auditory filter.

$$\mathrm{ERB} = 24.7\left(\frac{4.37\,f_0}{1000} + 1\right) \tag{2}$$

This is Greenwood's equation with constants derived from human filter-shape data; both ERB and f0 are in hertz. The traditional 'critical band' function of Zwicker (1961) overestimates the bandwidth at low centre frequencies.

When f0/b is large, as it is in the auditory case, the bandwidth of the GT filter is proportional to b, and the proportionality constant only depends on the filter order, n (Holdsworth, Nimmo-Smith, Patterson and Rice, 1988). When the order is 4, b is 1.019 ERB. The 3-dB bandwidth of the GT filter is 0.887 times the ERB.

Finally, with respect to phase, the GT is a minimum-phase filter and, although the phase characteristic of the human auditory filter is not known, it seems reasonable to assume that it will be close to minimum phase.

3. Practical: An analysis of the GT filter in the frequency domain (Holdsworth, Nimmo-Smith, Patterson and Rice, 1988) revealed that an nth-order GT filter can be approximated by a cascade of n identical first-order GT filters, and that the first-order GT filter has a particularly efficient recursive digital implementation. For a given filter, the input wave is frequency-shifted by -f0, low-pass filtered n times, and then frequency-shifted back up to f0.
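The three steps just described can be sketched as follows. The sketch assumes a one-pole recursive lowpass for each first-order stage. Its impulse response decays as exp(-2πbt), so n of them in cascade produce the t^(n-1) exp(-2πbt) envelope of the gammatone. This is a plain-Python illustration of the idea, not the optimized implementation of Holdsworth et al. The function names, the 16-kHz sampling rate and the test tones are assumptions. The value of b follows the order-4 relation b = 1.019 ERB given above.

```python
import numpy as np


def one_pole_lowpass(x: np.ndarray, a: float) -> np.ndarray:
    """y[k] = a*y[k-1] + (1-a)*x[k]: unit gain at 0 Hz, complex-valued output."""
    y = np.empty(len(x), dtype=complex)
    acc = 0.0 + 0.0j
    for k, v in enumerate(x):
        acc = a * acc + (1.0 - a) * v
        y[k] = acc
    return y


def gammatone_baseband(x: np.ndarray, fs: float, f0_hz: float, b_hz: float, n: int = 4) -> np.ndarray:
    """Order-n gammatone filter via frequency shift plus n identical lowpass stages."""
    k = np.arange(len(x))
    carrier = np.exp(2j * np.pi * f0_hz * k / fs)
    y = x * np.conj(carrier)                  # 1. shift the band around f0 down to 0 Hz
    a = np.exp(-2.0 * np.pi * b_hz / fs)      # pole matching the exp(-2 pi b t) envelope
    for _ in range(n):                        # 2. cascade of n identical first-order stages
        y = one_pole_lowpass(y, a)
    # 3. shift back up to f0; the factor 2 restores unit gain at f0 because only
    #    the positive-frequency half of a real input falls inside the passband
    return 2.0 * np.real(y * carrier)


fs = 16_000
f0 = 1000.0
b = 1.019 * 24.7 * (4.37 * f0 / 1000.0 + 1.0)   # b = 1.019 ERB for order 4
t = np.arange(int(0.5 * fs)) / fs
on = gammatone_baseband(np.cos(2 * np.pi * f0 * t), fs, f0, b)
off = gammatone_baseband(np.cos(2 * np.pi * (f0 + 5 * b) * t), fs, f0, b)
print(f"steady-state gain at f0: {np.abs(on[-1600:]).max():.3f}")
print(f"steady-state gain 5b above f0: {np.abs(off[-1600:]).max():.4f}")
```

Each stage costs one complex multiply-add per sample, which is where the efficiency of the cascade comes from. A compiled or vectorized recursion would be used in practice.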

The resultant gammatone auditory filterbank provides a good tradeoff between the accuracy with which it simulates cochlear filtering and the computational load it imposes, for applications where the level of the stimulus is moderate. The response of a 49-channel gammatone filterbank to four cycles of the vowel in the word 'past' is presented in Figure 1. Each line shows the output of one filter, and the surface defined by the set of lines is a simulation of basilar membrane motion as a function of time. At the top of the figure, where the filters are broad, the glottal pulses of the speech sound generate impulse responses in the filters. At the bottom of the figure, where the filters are narrow, the output of each filter is dominated by a single harmonic of the glottal pulse rate. Resonances in the vocal tract determine the position and shape of the energy concentrations referred to as 'formants'.

The GT auditory filterbank is particularly appropriate for simulating the cochlear filtering of broadband sounds like speech and music when the sound level is in the broad middle range of hearing. The GT is a linear filter and the magnitude characteristic is approximately symmetric on a linear frequency scale. The auditory filter is roughly symmetric on the same scale when the sound level is moderate; however, at high levels the highpass skirt becomes shallower and the lowpass skirt becomes sharper. For broadband sounds, the effect of filter asymmetry is only noticeable at the very highest levels (over 85 dBA). For narrowband sounds, the precise details of the filter shape can become important. For example, when a tonal signal is presented with a narrowband masker some distance away in frequency, the accuracy of the simulation will deteriorate as the frequency separation increases.
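The time-domain definition of the gammatone in item 1 can be sampled directly. Its spectrum then illustrates the property just cited: a linear filter whose magnitude characteristic is approximately symmetric about f0 on a linear frequency axis. This is a minimal sketch. The 1-kHz centre frequency, b = 135 Hz (close to 1.019 ERB at 1 kHz), the sampling rate, the 50-ms duration and the peak normalization of a are assumptions, and the function name is hypothetical.

```python
import numpy as np


def gammatone_ir(f0_hz: float, b_hz: float, n: int = 4, fs: int = 16_000,
                 dur: float = 0.05, phase: float = 0.0):
    """Sample gt(t) = a t^(n-1) exp(-2 pi b t) cos(2 pi f0 t + phase) for t > 0.

    The gain a is set (an assumption) so that the largest sample has magnitude 1.
    Returns (t, envelope, impulse_response).
    """
    t = np.arange(1, int(round(fs * dur))) / fs          # strictly t > 0
    env = t ** (n - 1) * np.exp(-2.0 * np.pi * b_hz * t)
    g = env * np.cos(2.0 * np.pi * f0_hz * t + phase)
    a = 1.0 / np.abs(g).max()
    return t, a * env, a * g


fs, f0, b = 16_000, 1000.0, 135.0          # b close to 1.019 ERB at 1 kHz
t, env, g = gammatone_ir(f0, b, fs=fs)

# The envelope peaks where d/dt[t^(n-1) exp(-2 pi b t)] = 0, i.e. at t = (n-1)/(2 pi b).
print(f"envelope peak: {1e3 * t[np.argmax(env)]:.2f} ms "
      f"(predicted {1e3 * 3 / (2 * np.pi * b):.2f} ms)")

# Zero-padding the FFT to fs points gives 1-Hz bins, so bin k corresponds to k Hz.
mag = np.abs(np.fft.rfft(g, n=fs))
print(f"|G(f0 - 200 Hz)| / |G(f0 + 200 Hz)| = {mag[800] / mag[1200]:.3f}")
```

A ratio near 1 reflects the near-symmetric, level-independent gammatone. The level-dependent asymmetry of the real auditory filter at high levels is exactly what this linear filter cannot reproduce.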

The laterality dimension of the auditory image

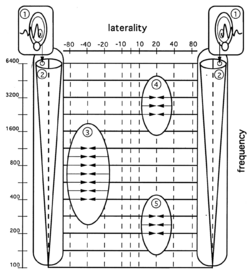

A schematic diagram of this analysis, and of the arrival-time analysis that follows, is presented in Figure 2.1.3. In this example, the sound comes from a pair of sources, one 40 degrees to the right of the listener and the other 20 degrees to the left of the listener. The source on the right contains only mid-frequency components; the source on the left contains low- and high-frequency components but no mid-frequencies. At the top, the complex sound waves generated by the pair of sources are shown entering the left and right outer ears, or pinnae. The outer ear and the middle ear concentrate sounds and increase our sensitivity to quiet sounds, but they do not analyse the sound or change the dimensionality of the representation as it passes through. The waves then proceed into the left and right inner ears, or cochleas, as shown by the two vertical arrows and the two vertical tapered tubes. The bold, dashed line down the middle of each tapered tube represents the basilar partition, which runs down the middle of the cochlea and performs the spectral analysis. In the cochlea, the components of the sound are sorted according to frequency and the results are set out along a quasi-logarithmic dimension of frequency, as shown by the frequency scale beside the left cochlea. It is this process that creates the frequency dimension of our auditory images and the vertical dimension of the simulated images produced by AIM (e.g. the vertical dimension in Figures 2.1.1 and 2.1.2).

The range of sound intensities in the world around us is enormous. To fit the large dynamic range of sound into the restricted range of neural processing, the outer haircells in the cochlea apply compression to the basilar membrane motion, and it is this compressed motion that is actually transduced by the inner haircells and transmitted as neural spikes up the auditory nerve to the cochlear nucleus (CN). The transduction process involves adaptation within frequency channels, and the processing in the CN involves lateral inhibition across frequency channels. Together they convert basilar membrane motion into a multi-channel neural activity pattern, or NAP, which is the next important representation of sound in AIM.
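The compression-then-transduction step can be caricatured in a few lines. The sign-preserving power law with exponent 0.3 stands in for outer-haircell compression, and half-wave rectification stands in for inner-haircell transduction. The exponent and the function name are assumptions for illustration. AIM's own transduction stage, including the adaptation and lateral inhibition mentioned above, is considerably more elaborate.

```python
import numpy as np


def simple_nap(bmm: np.ndarray, exponent: float = 0.3) -> np.ndarray:
    """Crude stand-in for cochlear compression and neural transduction.

    bmm: simulated basilar membrane motion, shape (channels, samples).
    A sign-preserving power law (exponent < 1, an assumed value) compresses
    the motion; half-wave rectification keeps only the phase of the motion
    that excites the inner haircells. Adaptation and lateral inhibition
    (the 2D-AT stage) are deliberately omitted.
    """
    compressed = np.sign(bmm) * np.abs(bmm) ** exponent
    return np.maximum(compressed, 0.0)


# A 60-dB range of input amplitudes (1000:1) is squeezed to 1000**0.3, about 7.9:1 (18 dB).
levels = np.array([[1e-3, 1.0, -1.0]])
print(simple_nap(levels))
```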

Although it is necessary, the global compression applied by the outer haircells reduces contrast in the representation of the sound, and this loss of contrast is physically unavoidable. Functionally, adaptation and lateral inhibition restore local contrast in the representation of the sound, and Chapter 2 describes how these processes are simulated in AIM. Together, these mechanisms are referred to as two-dimensional adaptive thresholding, or 2D-AT.
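The 2D-AT algorithm itself is left to Chapter 2, so the following is a hypothetical simplification rather than AIM's implementation. Each channel keeps a threshold that jumps to new peaks and then decays (adaptation within a channel), and a channel's effective threshold is raised by its neighbours' thresholds (lateral inhibition across channels); only activity above the effective threshold is passed on. The `decay` and `spread` parameters are illustrative assumptions.

```python
def adaptive_threshold(nap, decay=0.99, spread=0.5):
    """
    Hypothetical 2-D adaptive thresholding (a simplification, not AIM's 2D-AT).

    nap: list of channels, each a list of non-negative activity values of equal length.
    Returns an array of the same shape containing only activity above threshold.
    """
    n_ch, n_t = len(nap), len(nap[0])
    thr = [0.0] * n_ch
    out = [[0.0] * n_t for _ in range(n_ch)]
    for t in range(n_t):
        # Adaptation within a channel: every threshold decays a little each sample.
        thr = [v * decay for v in thr]
        # Lateral inhibition across channels: neighbours raise the effective threshold.
        effective = []
        for c in range(n_ch):
            neighbours = [thr[k] for k in (c - 1, c + 1) if 0 <= k < n_ch]
            effective.append(max([thr[c]] + [spread * v for v in neighbours]))
        for c in range(n_ch):
            x = nap[c][t]
            out[c][t] = max(0.0, x - effective[c])  # pass only supra-threshold activity
            thr[c] = max(thr[c], x)                 # threshold jumps up to a new peak
    return out


steady = adaptive_threshold([[1.0] * 20])
print(steady[0][0], round(steady[0][10], 6))  # 1.0 0.01
```

In this sketch a steady input produces a strong onset response followed by a small sustained output, and a weak channel next to a strong one is suppressed entirely; both effects sharpen local contrast after global compression.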

In AIM, the operation of each cochlea is simulated by a bank of auditory filters and a bank of 'neural' transducers. Together, they convert the two-dimensional wave arriving at one ear into a three-dimensional simulation of the complex neural activity pattern produced by the cochlea in the auditory nerve in response to the input sound. The auditory filterbank, the scale of the frequency dimension, and the characteristics that the filtering imparts to the auditory image are described in Chapter 2.2, which explains how the cochlea performs the frequency analysis that creates the tonotopic dimension of the auditory image.
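A minimal filterbank-plus-transducer sketch, under stated assumptions: the auditory filters are modelled as fourth-order gammatone filters (a standard auditory-filter approximation) with bandwidths from the ERB formula, and the 'neural' transducer is crude half-wave rectification followed by power-law compression. AIM's own filterbank and transducer are described in Chapter 2.2 and may differ; all names here are hypothetical, and the direct convolution is written for clarity rather than speed.

```python
import math


def gammatone_ir(fc, fs, duration=0.025, order=4, b=1.019):
    """Impulse response of a gammatone filter centred at fc Hz, normalised to unit gain at fc."""
    erb = 24.7 * (4.37 * fc / 1000.0 + 1.0)  # ERB of the filter in Hz
    ir = []
    for i in range(int(duration * fs)):
        t = i / fs
        env = t ** (order - 1) * math.exp(-2 * math.pi * b * erb * t)
        ir.append(env * math.cos(2 * math.pi * fc * t))
    # Normalise so that a sinusoid at fc passes with (approximately) unit gain.
    re = sum(h * math.cos(2 * math.pi * fc * i / fs) for i, h in enumerate(ir))
    im = sum(h * math.sin(2 * math.pi * fc * i / fs) for i, h in enumerate(ir))
    gain = math.hypot(re, im)
    return [h / gain for h in ir]


def convolve(x, h):
    """Direct-form FIR filtering of x by h (output has the same length as x)."""
    return [sum(h[k] * x[n - k] for k in range(min(n + 1, len(h)))) for n in range(len(x))]


def simulate_nap(signal, centre_freqs, fs, exponent=0.3):
    """Toy NAP: gammatone filterbank, then half-wave rectification and power-law compression."""
    nap = []
    for fc in centre_freqs:
        bm = convolve(signal, gammatone_ir(fc, fs))  # simulated basilar-membrane motion
        nap.append([max(0.0, v) ** exponent for v in bm])
    return nap


fs = 16000
tone = [math.sin(2 * math.pi * 1000 * n / fs) for n in range(int(0.05 * fs))]
nap = simulate_nap(tone, [500.0, 1000.0, 3000.0], fs)
mean_activity = [sum(row) / len(row) for row in nap]
```

The result is a channels-by-time array: a one-dimensional pressure waveform becomes a multi-channel activity pattern in which a 1 kHz tone drives the 1 kHz channel most strongly.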

Laterality Analysis and the Location of the Auditory Image

The frequency channels of the two cochleas are aligned. In the third stage of auditory image construction, the pulses in each pair of channels with the same centre frequency are analysed in terms of their arrival times, across a horizontal array of laterality. This arrival-time comparison takes place in the brain stem, specifically in the superior olivary complex, which is the next stage after the CN. The operation of the mechanism is illustrated in Figure 2.1.4, which shows the situation when there is one source 40 degrees to the right of the listener and another 20 degrees to the left. Sound from the source on the right arrives at the right ear a fraction of a millisecond sooner than it does at the left ear, and this arrival-time difference is preserved in the spectral analysis process. After frequency analysis and neural transduction, activity in channels with the same centre frequency travels across a laterality line specific to that pair of channels, and the information travels at the same, fixed rate. In the current example, for the sound on the right, the components from the right cochlea enter the laterality line sooner than those from the left cochlea, and so they meet at a point to the left of the mid-point on the laterality line. Where they meet specifies the angle of the source relative to the head, as indicated by the values across the top of the frequency-laterality plane. This coincidence detection process is applied on a channel-by-channel basis, and the individual laterality markers are combined to determine the direction of the source in the horizontal plane surrounding the listener. The mechanism does not reveal the elevation of the source or its distance from the listener; it just indicates the angle of the source relative to the head in the horizontal plane. Nevertheless, this laterality information is of considerable assistance in locating and segregating sources. The details are presented in Chapter 2.3.
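The coincidence mechanism can be sketched as a search over internal delays within one frequency channel, which amounts to a cross-correlation of the two ears' activity. This sketch assumes a simple straight-path model in which the interaural time difference is d·sin(θ)/c, with an assumed ear separation d = 0.18 m and speed of sound c = 343 m/s; real heads diffract sound, so the mapping is only approximate. Positive delays correspond to the right ear leading, i.e. a source on the right. All names are hypothetical.

```python
import math
import random

SPEED_OF_SOUND = 343.0  # m/s in air at about 20 °C
EAR_SEPARATION = 0.18   # m; assumed effective distance between the ears


def itd_for_angle(angle_deg):
    """ITD in seconds under a straight-path model; positive means the right ear leads."""
    return EAR_SEPARATION * math.sin(math.radians(angle_deg)) / SPEED_OF_SOUND


def best_internal_delay(left, right, max_lag):
    """Jeffress-style coincidence detection for one channel.

    For each internal delay `lag` (in samples), sum the products left[n] * right[n - lag],
    i.e. the coincidences between the left input and the right input delayed by `lag`.
    The delay with the largest sum marks the laterality of the source.
    """
    best_lag, best_score = 0, float("-inf")
    for lag in range(-max_lag, max_lag + 1):
        score = sum(left[n] * right[n - lag]
                    for n in range(len(left)) if 0 <= n - lag < len(right))
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag


def angle_from_lag(lag, fs):
    """Invert the straight-path model to recover azimuth in degrees."""
    s = lag / fs * SPEED_OF_SOUND / EAR_SEPARATION
    return math.degrees(math.asin(max(-1.0, min(1.0, s))))


def localise(angle_deg, fs=48000, n=2000, seed=1):
    """Simulate a broadband source at angle_deg and estimate its azimuth from one channel."""
    rng = random.Random(seed)
    pad = 64
    source = [rng.gauss(0.0, 1.0) for _ in range(n + 2 * pad)]
    d = round(itd_for_angle(angle_deg) * fs)  # ITD rounded to whole samples
    right = source[pad:pad + n]
    left = source[pad - d:pad - d + n]        # left[i] = right[i - d]: delayed when d > 0
    max_lag = math.ceil(EAR_SEPARATION / SPEED_OF_SOUND * fs)
    return angle_from_lag(best_internal_delay(left, right, max_lag), fs)


print(round(localise(40.0), 1), round(localise(-20.0), 1))
```

The estimates land within about a degree of 40 and -20 degrees; the residual error comes from rounding the interaural delay to whole samples. A full model would repeat this in every channel and combine the laterality markers, as the text describes.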

The view of spectral analysis and laterality analysis presented here is the standard view of auditory preprocessing. Together, these two processes produce two of the dimensions of auditory images as we experience them, and up to this level, AIM is a straightforward functional model of auditory processing that is relatively uncontroversial.

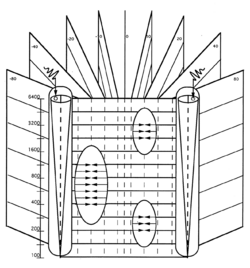

Time-Interval Analysis and the Stabilization of the Auditory Image

Now consider what we do and do not know about a sound as it occurs at a point on this frequency-laterality plane. We know that the sound contains energy at a specific frequency, say 1.0 kHz, and that the source of the sound is at a specific angle relative to the head, say 40 degrees to the right. What we do not know is whether the sound is regular or irregular: whether the source is a bassoon or a washing machine, both of which could be at 40 degrees and have energy at 1 kHz. The system has information about the distribution of activity across frequency and the distribution of laterality values for those channels. But the spectrum of a bassoon note with one broad resonance could be quite similar to the distribution of noise from a washing machine and, at a distance, both would be point sources. So the prominent difference between the perceptions produced by a bassoon and a washing machine is not properly represented in the frequency-laterality plane. The crucial information in this case is temporal regularity; the temporal fine structure of the filtered waves flowing from the cochlea is highly regular in the case of a bassoon note and highly irregular in the case of washing-machine noise. Information about fine-structure regularity is not available in the frequency-laterality plane. The plane is a representation of sound in the auditory system prior to temporal integration; as such, it represents the instantaneous state of the sound and does not include any representation of the variability of the sound over time.

The sophisticated processing of sound quality by humans indicates that peripheral auditory processing includes at least one further stage: a stage in which the components of the sound are sorted, or filtered, according to the time intervals in the fine structure of the neural activity pattern flowing from the cochlea. It is as if the system sets up a vertical array of dynamic histograms behind the frequency-laterality plane, one histogram for each frequency-laterality combination. When the sound is regular, the histogram contains many instances of a few time intervals; when the sound is irregular, the histogram contains a few instances of many time intervals.
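One way to make the histogram idea concrete is an all-order interval histogram for the spikes in a single frequency-laterality channel: count the time interval between every pair of spikes, up to some maximum. This is an illustrative stand-in only; in AIM the histogram-like representation is produced by strobed temporal integration rather than by counting every spike pair. The 8 ms period, 35 ms maximum interval, 1 ms bins and function names are assumptions chosen for the example.

```python
import random
from collections import Counter


def interval_histogram(spike_times_ms, max_interval_ms=35.0, bin_ms=1.0):
    """All-order interval histogram: bin the interval between every pair of spikes."""
    hist = Counter()
    times = sorted(spike_times_ms)
    for i, t0 in enumerate(times):
        for t1 in times[i + 1:]:
            dt = t1 - t0
            if dt > max_interval_ms:
                break  # later spikes are even further away
            hist[int(dt // bin_ms)] += 1
    return hist


def concentration(hist, top=4):
    """Fraction of all intervals that fall in the `top` most populated bins."""
    total = sum(hist.values())
    return sum(count for _, count in hist.most_common(top)) / total


regular = [8.0 * k for k in range(50)]  # period 8 ms, 50 spikes over 400 ms
rng = random.Random(0)
irregular = [rng.uniform(0.0, 400.0) for _ in range(50)]  # same count, random times

h_reg, h_irr = interval_histogram(regular), interval_histogram(irregular)
print(len(h_reg), concentration(h_reg))  # 4 1.0
print(len(h_irr), round(concentration(h_irr), 2))
```

The regular train puts every interval into four bins (8, 16, 24 and 32 ms), "many instances of a few time intervals", whereas the irregular train spreads a similar number of intervals across most of the bins.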

The measurement of time intervals in the NAP and the preservation of time-interval differences in a histogram add the final dimension to the auditory image and to our space of auditory perception. This space is illustrated in Figure 4, where vertical planes are shown fanning out behind the frequency-laterality surface of Figure 3. Each vertical plane is made up of the time-interval histograms for all the channels associated with a particular laterality. For a point source, all of the information about the temporal regularity of the source appears in one of these planes, and that information is the Auditory Image of the source at that moment. For a tonal sound, the time intervals are highly regular and there is an orderly relationship between the time-interval patterns in different channels, as illustrated by the auditory images of the click train and the vowel shown in Figures 1a and 2a. For noisy sounds, the time intervals are highly irregular and any pattern that does form momentarily in one portion of the image is unrelated to activity in the rest of the image, as illustrated by the auditory images of the white noise and the fricative consonants shown in Figures 1b and 2b.

Strobed Temporal Integration

In AIM, the time-interval filtering process is performed by a new form of strobed temporal integration, motivated originally by a contrast between the representation of periodic sounds at the output of the cochlea and the perceptions that we hear in response to these sounds. Consider the microstructure of any single channel of the neural activity pattern produced at the output of the cochlea by a periodic sound like a click train or a quasi-periodic sound like a vowel. Since the sounds repeat, the microstructure consists of alternating bursts of activity and quiescence. The level of activity at the output of the cochlea must, at some level, correspond to the loudness of the sound, since an absence of activity corresponds to silence and the average level of activity increases with the intensity of the sound. This suggests that periodic sounds should give rise to the sensation of loudness fluctuations, since the activity level oscillates between two levels. But periodic sounds do not give rise to the sensation of loudness fluctuations. Indeed, quite the opposite: they produce the most stable auditory images that we hear. These observations indicate that some form of temporal integration is applied to the neural activity pattern after it leaves the cochlea and before our initial perception of the sound.

Until recently, it was assumed that simple temporal averaging could be used to perform the temporal integration and, indeed, if one averages over 10-20 cycles of a sound, the output will be relatively constant even when the input level is oscillating. However, the period of male vowels is on the order of 8 ms, and if the temporal duration of the moving average (its integration time) is long enough to produce stable output, it would smear out features in the fine-structure of the activity pattern; features that make a voice guttural or twangy and which help us distinguish speakers.
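The dilemma can be illustrated with a toy single-channel NAP consisting of bursts of rectified, decaying ringing every 8 ms. At that period, averaging over 10 to 20 cycles means an integration time of 80 to 160 ms. The sketch compares a 120 ms (15-cycle) moving average with a 2 ms one: the long window gives a flat output, but a flat output also means that all within-cycle structure has been averaged away, while the short window preserves the structure but fluctuates strongly from cycle to cycle. The signal, sample rate and window lengths are illustrative assumptions.

```python
import math

FS = 10_000      # samples per second (illustrative)
PERIOD_MS = 8.0  # roughly the period of a male voice, as in the text


def nap_channel(duration_ms=400.0, fs=FS, period_ms=PERIOD_MS):
    """Toy one-channel NAP: each cycle is a burst of rectified, decaying 1 kHz ringing, then quiet."""
    period = int(period_ms * fs / 1000)
    out = []
    for i in range(int(duration_ms * fs / 1000)):
        t = (i % period) / fs  # seconds since the current cycle began
        ring = math.exp(-t / 0.001) * math.sin(2 * math.pi * 1000 * t)
        out.append(max(0.0, ring))  # half-wave rectification
    return out


def moving_average(x, window):
    """Causal moving average over `window` samples, using a running sum."""
    out, acc = [], 0.0
    for i, v in enumerate(x):
        acc += v
        if i >= window:
            acc -= x[i - window]
        out.append(acc / min(i + 1, window))
    return out


def ripple(y, start):
    """Peak-to-peak fluctuation relative to the mean, ignoring the first `start` samples."""
    seg = y[start:]
    return (max(seg) - min(seg)) / (sum(seg) / len(seg))


nap = nap_channel()
short_avg = moving_average(nap, 2 * FS // 1000)   # 2 ms window: keeps the burst structure
long_avg = moving_average(nap, 120 * FS // 1000)  # 120 ms = 15 cycles: flat output
print(round(ripple(short_avg, 1500), 2), round(ripple(long_avg, 1500), 4))
```

Neither window gives both a stable output and an intact fine structure, which is the motivation for the strobed alternative described next.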

In AIM, a new form of temporal integration has been developed to stabilise the fast-flowing neural activity pattern without smearing the microstructure of activity patterns from tonal sounds. Briefly, a bank of delay lines is used to form a buffer store for the neural activity flowing from the cochlea; the pattern decays away as it flows, out to about 80 ms. Each channel has a strobe unit which monitors the instantaneous activity level and when it encounters a large peak it transfers the entire record in that channel of the buffer to the corresponding channel of a static image buffer, where the record is added, point for point, with whatever is already in that channel of the image buffer. Information in the image buffer decays exponentially with a half-life of about 40 ms. The multi-channel result of this strobed temporal integration process is the auditory image. For periodic and quasi-periodic sounds, the strobe mechanism rapidly matches the temporal integration period to the period of the sound and, much like a stroboscope, it produces a stable image of the repeating temporal pattern flowing up the auditory nerve.
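A single-channel sketch of strobed temporal integration follows, using the 80 ms buffer and the 40 ms half-life given above. Two details are assumptions rather than AIM's published choices: the strobe criterion (a local maximum at or above a fixed threshold, with one sample of look-ahead) and the linear fade applied to the buffer record. The function name is hypothetical. Index k of the returned row is the time-interval axis, k / fs seconds before the strobe, with the strobe pulse itself at k = 0.

```python
def strobed_temporal_integration(nap, fs, buffer_ms=80.0, half_life_ms=40.0, strobe_threshold=0.5):
    """Single-channel strobed temporal integration (STI) sketch.

    nap: activity values for one channel, sampled at fs Hz.
    Returns the auditory-image row at the end of the signal: element k is the decaying
    sum of activity that occurred k samples before each strobe (k = 0 is the strobe itself).
    """
    buf_len = int(buffer_ms * fs / 1000)
    decay = 0.5 ** (1000.0 / (half_life_ms * fs))      # per-sample image decay factor
    fade = [1.0 - k / buf_len for k in range(buf_len)]  # assumed linear fade along the buffer
    image = [0.0] * buf_len
    last = 0                                            # sample at which image was last updated
    for n, x in enumerate(nap):
        # Assumed strobe criterion: a local maximum at or above a fixed threshold.
        is_strobe = (x >= strobe_threshold
                     and (n == 0 or x > nap[n - 1])
                     and (n + 1 == len(nap) or x >= nap[n + 1]))
        if not is_strobe:
            continue
        factor = decay ** (n - last)                    # decay accumulated since the last update
        image = [v * factor for v in image]
        last = n
        for k in range(min(buf_len, n + 1)):            # add the buffer record, point for point
            image[k] += fade[k] * nap[n - k]
    factor = decay ** (len(nap) - 1 - last)             # decay up to the end of the signal
    return [v * factor for v in image]


fs = 5000
period = 8 * fs // 1000  # 8 ms click period = 40 samples
clicks = [1.0 if n % period == 0 else 0.0 for n in range(2000)]
row = strobed_temporal_integration(clicks, fs)
peaks = [k for k, v in enumerate(row) if v > 0]
print(peaks[:4])  # [0, 40, 80, 120]
```

For the click train, activity appears only at multiples of the period, and these positions do not drift as more of the sound arrives. Appending 40 ms of silence simply halves the whole row in place, matching the description of an image that fades without flowing left or right.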

In AIM, it is strobed temporal integration that performs the time-interval filtering and creates the fifth dimension of the auditory image. It is a time-interval dimension rather than a time dimension; activity at time interval ti means that there was recently activity in the neural pattern ti milliseconds before a large pulse that initiated temporal integration. The pulse that caused the temporal integration is added into the image at the time interval 0 ms. Neither the strobe pulse nor the pulse separated from it by ti ms is separately observable in the auditory image; they have been combined with previous pulses that bore the same temporal relationship in the neural activity pattern. The activity in the image is the decaying sum of all such recent events. As time passes, the pattern does not flow left or right; when the sound comes on, the image builds up rapidly in place, and when the sound goes off, the image fades away rapidly in place.

Strobed temporal integration (STI) is described in Chapter 2.4, where it is argued that STI completes the image construction process. STI is also responsible for determining the basic figure-ground relationships in hearing, and the degree to which we do and do not perceive phase relationships in a sound.

The conception of auditory processing presented in this book focuses on snapshots of the space of auditory perception referred to as auditory images. It is argued that auditory processing divides naturally into the set of transforms required to construct the space of auditory perception, and the more central, specialized processors that analyse the figures and events that appear in auditory image space. The pivotal role of this specific internal representation of sound is why this conception of auditory processing is referred to as the Auditory Image Model.

Physiologically, it appears that the required array of time-interval histograms could be assembled by the neural machinery in the auditory pathway as early as the inferior colliculus (IC), which is the final nucleus in the mid-brain of mammals. There is a dramatic reduction in the upper limit of phase locking at this stage in the system, which suggests that a code change occurs here and that it involves the dominant aspect of temporal integration. For purposes of discussion, it is argued that the structures and events that appear in the auditory image are the first internal representation of a sound of which we can be aware, and the representation of sound that forms the basis of all subsequent processing in the auditory brain. It is clear that auditory processing becomes massively parallel and iterative in the region around primary auditory cortex (PAC), so AIM would appear to predict that the construction of the auditory image must be completed no later than PAC, and possibly in the medial geniculate body (MGB) between IC and PAC.

The Transition from Physiological to Functional Modelling in AIM

It is important to note, before proceeding, that our understanding of auditory preprocessing, in the sense of the physiological processes involved in constructing the internal representation of sound that supports our first experience of a sound, becomes less and less secure as the sound progresses through the stages required to construct the auditory image. At the start, in the cochlea, it is possible to make direct measurements of the frequency analysis performed at a specific place in the cochlea, and these can be used to produce detailed models of the function of the cochlea as a whole. It is also possible to record the firing of single units with electrodes inserted into any of the nuclei of the auditory pathway, and in the nuclei prior to the IC, it is possible to posit reasonable hypotheses concerning the role of a unit in the sharpening or localization functions attributed to the early stages of image construction. At the level of the IC, however, all of the different neural paths converge and there is a sudden reduction in the upper limit of phase locking. At this point, hypotheses about the function of a unit become much more speculative, and models of function rapidly shift from the interpretation of single-unit recordings to considerations of what signal processing is required to convert the NAP observed in the early stages of the mid-brain into a functional representation intended to explain auditory experience. The shift from physiological to perceptual modelling occurs in AIM at the point where strobed temporal integration is used to stabilize the repeating patterns observed in the NAP. So the time-interval dimension that STI imparts to the space of auditory perception is currently hypothetical, whereas the tonotopic dimension can be observed in the cochlea and in the auditory nuclei up to and including auditory cortex.

Physiologically, it appears that the array of time-interval histograms that form the auditory image could be assembled by the neural machinery in the auditory pathway as early as the inferior colliculus (IC). There is a dramatic reduction in the upper limit of phase locking at this stage in the system, which suggests that a code change occurs here and that it involves temporal integration and the loss of some of the temporal information in the NAP. STI is a functional model of neural processing at this stage; it suggests that what is lost is the order information between successive cycles of the repeating pattern, rather than the temporal fine structure within each cycle, and it shows how this could be accomplished. It is argued that the figures and events that appear in the auditory image form the basis of all central processing in the auditory system, and it is clear that auditory processing becomes massively parallel in the region around primary auditory cortex, where there are as many feed-back as feed-forward connections. As a result, AIM would appear to predict that the construction of the auditory image is completed no later than PAC, and possibly in the medial geniculate body (MGB) between IC and PAC.