Acoustic events and Auditory Events

From CNBH Acoustic Scale Wiki

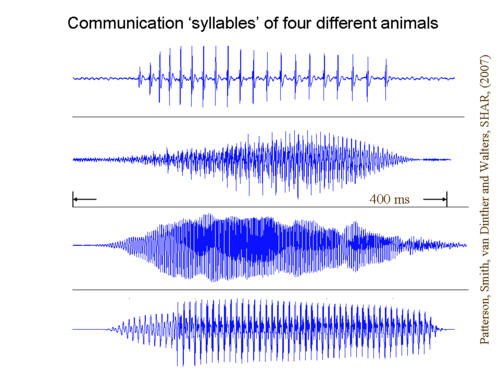

We are now ready to take on sounds and auditory events. Like Magritte’s painting of a ‘pipe,’ however, the perception of ‘Le mot pipe’ and the video of the auditory representation of ‘Le mot pipe’ are a little complicated, so we will begin with a set of four relatively simple communication sounds, one from each of four animals. The acoustic waveforms of the sounds show that each is a compact, well-defined acoustic event, and when it is played on its own, it produces a compact, well-defined perception. (Play the sounds once or twice.)

- Mongolar Drummer fish
- Bullfrog
- Macaque
- Human saying /a/

The sounds are referred to as animal syllables since they convey the message of the species (Patterson et al., 2007). For many people, three of the four sounds produce novel perceptions because they come from animals that the listener has not encountered before. Nevertheless, the auditory system automatically constructs a perception of the sound, a perception that we might refer to as an Auditory Event (Patterson et al., 1992). In other words, a sequence of largely data-driven processes converts a coherent segment of sound arriving at the ear (an acoustic event) into an auditory perception that might reasonably be referred to as an Auditory Event.

The video Auditory response to the animal syllables shows the kind of processing that the auditory system might apply to these sounds to produce the Auditory Events that you hear.

Video: Auditory response to the animal syllables (download: HumanMacaqueFrogFish.mov, 15.30 MB)

The video was produced with the Auditory Image Model (AIM) (Patterson et al., 1995). AIM produces a dynamic optical event, in the form of a video, to go with each acoustic event (i.e., each syllable). The Visual Event that you see in response to the optical event comes on and goes off synchronously with the acoustic event, and during the sustained part of the event the video provides a relatively stable representation of the pitch and timbre of the sound. The video simulates the neural processing that we think the auditory system performs in response to each syllable: the processing that constructs the Auditory Image with the four Auditory Events that you hear in response to the four acoustic events on the sound track of the video.

AIM applies the same series of transforms to all incoming sounds, independent of their meaning or context. It therefore produces Auditory Images, Figures and Events, but not Auditory Objects, since no meaning or context is added to the Figures and Events to elevate them to the status of Auditory Objects. Simulating this data-driven construction is the basic purpose of AIM, and the model will be used to assist in describing the analogy between Visual Events and Auditory Events, and their relationships to the corresponding optical and acoustic events.

The notation presented above for the visual perception of the pipe event can be used to develop notation for the call of an animal that we do not immediately recognize. Specifically:

$$
I_A \bigl[ E_A \bigl\{ F_A^n(s_a) \bigr\} \bigr] \quad\xleftarrow{\quad A \quad}\quad s \bigl[ e_a \bigl\{ p_e^n ( s_a ) \bigr\} \bigr]
$$

In words, beginning with the external world, a scene, $s[\,\cdot\,]$, contains an acoustic event, $e_a\{\,\cdot\,\}$, which is a sequence of pulses (with their resonances), $p_e^n(\,\cdot\,)$, emitted by an acoustic source, $s_a$, in an external object. The auditory system converts the external scene, $s[\,\cdot\,]$, into an auditory image, $I_A[\,\cdot\,]$, which contains an auditory event, $E_A\{\,\cdot\,\}$, which is a sequence of auditory figures, $F_A^n(\,\cdot\,)$, one for each pulse-resonance cycle emitted by the acoustic source, $s_a$.
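The nesting in this notation can be made concrete with a small data-structure sketch. The Python below is only an illustration of the containment relations (scene, event, pulse-resonance cycles on the acoustic side; image, event, figures on the auditory side) and of the one-figure-per-cycle correspondence. All class and function names are hypothetical, introduced here for illustration; the code is not part of AIM and performs no auditory processing.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PulseResonance:
    """p_e^n(s_a): the n-th pulse (with its resonance) from source s_a."""
    source: str  # label standing in for the acoustic source s_a
    n: int       # index of the cycle within the acoustic event


@dataclass
class AcousticEvent:
    """e_a{...}: a sequence of pulse-resonance cycles."""
    cycles: List[PulseResonance]


@dataclass
class Scene:
    """s[...]: the external scene, containing one acoustic event."""
    event: AcousticEvent


@dataclass
class AuditoryFigure:
    """F_A^n(s_a): the auditory figure evoked by the n-th cycle."""
    source: str
    n: int


@dataclass
class AuditoryEvent:
    """E_A{...}: a sequence of auditory figures."""
    figures: List[AuditoryFigure]


@dataclass
class AuditoryImage:
    """I_A[...]: the auditory image, containing one auditory event."""
    event: AuditoryEvent


def auditory_mapping(scene: Scene) -> AuditoryImage:
    """Stand-in for the arrow labelled A: one figure per pulse-resonance cycle.

    Only the structural correspondence is modelled; no signal processing.
    """
    figures = [AuditoryFigure(c.source, c.n) for c in scene.event.cycles]
    return AuditoryImage(AuditoryEvent(figures))


def make_scene(source: str, n_cycles: int) -> Scene:
    """Build a toy scene whose event has n_cycles pulse-resonance cycles."""
    cycles = [PulseResonance(source, n) for n in range(1, n_cycles + 1)]
    return Scene(AcousticEvent(cycles))


scene = make_scene("bullfrog", 5)
image = auditory_mapping(scene)
print(len(image.event.figures), "figures for", len(scene.event.cycles), "cycles")
```

The mapping is the same whatever the source label is, which mirrors the point that the transform from acoustic event to Auditory Event does not depend on meaning or context.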

References

- Patterson, R.D., Allerhand, M.H. and Giguère, C. (1995). “Time-domain modeling of peripheral auditory processing: A modular architecture and a software platform.” J. Acoust. Soc. Am., 98, pp. 1890-1894.

- Patterson, R.D., Robinson, K., Holdsworth, J., McKeown, D., Zhang, C. and Allerhand, M. (1992). “Complex Sounds and Auditory Images”, in Auditory Physiology and Perception, Y. Cazals, L. Demany and K. Horner, editors (Pergamon Press, Oxford).

- Patterson, R.D., van Dinther, R. and Irino, T. (2007). “The robustness of bio-acoustic communication and the role of normalization”, in Proceedings of the 19th International Congress on Acoustics, p. 07-011.